Critical LMDeploy Vulnerability Exploited Within Hours of Disclosure

A security flaw in LMDeploy, an open-source toolkit for compressing, deploying, and serving large language models (LLMs), was actively exploited in the wild less than 13 hours after its public disclosure. Tracked as CVE-2026-33626 with a CVSS score of 7.5, the vulnerability is a Server-Side Request Forgery (SSRF) issue in the toolkit’s vision-language module.

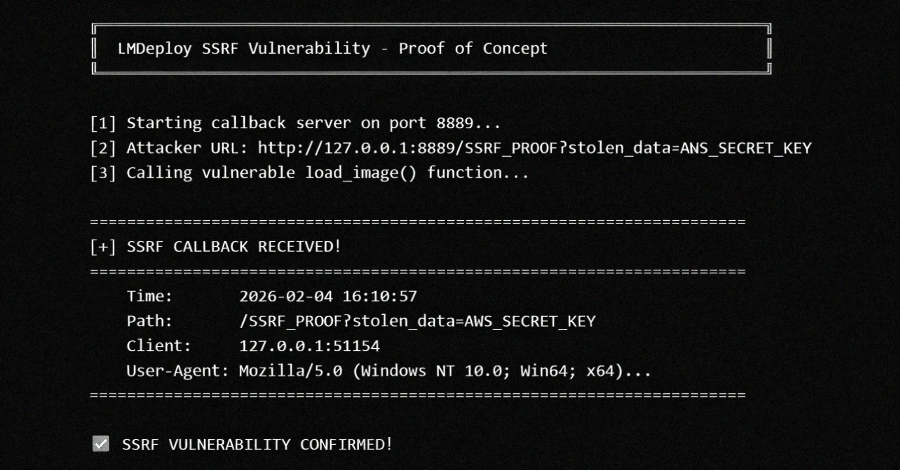

The SSRF vulnerability arises from the `load_image()` function in `lmdeploy/vl/utils.py`. The function fetches arbitrary URLs without checking whether they point to internal or private IP addresses, allowing attackers to reach cloud metadata services, internal networks, and other sensitive resources through the model server. All versions of LMDeploy up to and including 0.12.0 with vision-language support are affected. The vulnerability was discovered and reported by Igor Stepansky, a researcher at Orca Security.
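The kind of check that was missing can be sketched in a few lines of Python. This is an illustration only, not the upstream patch: the function name `is_url_safe_to_fetch` and its `resolver` parameter are hypothetical, and the sketch assumes that rejecting every non-public address is acceptable for the deployment.

```python
import ipaddress
import socket
from urllib.parse import urlsplit


def _is_public(ip_text: str) -> bool:
    """True if the textual IP address is globally routable."""
    try:
        # Drop any IPv6 zone suffix such as "%eth0" before parsing.
        addr = ipaddress.ip_address(ip_text.split("%", 1)[0])
    except ValueError:
        return False
    # Treat IPv4-mapped IPv6 addresses (::ffff:a.b.c.d) as their IPv4 form.
    mapped = getattr(addr, "ipv4_mapped", None)
    if mapped is not None:
        addr = mapped
    return addr.is_global


def is_url_safe_to_fetch(url: str, resolver=socket.getaddrinfo) -> bool:
    """Hypothetical guard: allow only http(s) URLs whose host resolves
    exclusively to public addresses."""
    try:
        parts = urlsplit(url)
        port = parts.port
    except ValueError:
        return False
    if parts.scheme not in ("http", "https") or not parts.hostname:
        return False
    default_port = 443 if parts.scheme == "https" else 80
    try:
        infos = resolver(parts.hostname, port or default_port,
                         type=socket.SOCK_STREAM)
    except (OSError, UnicodeError):
        return False
    # Reject if resolution yields nothing or any address is non-public.
    return bool(infos) and all(_is_public(info[4][0]) for info in infos)
```

A check like this rejects the AWS metadata address (`169.254.169.254`), loopback, and RFC 1918 ranges before any request is sent. It is not sufficient on its own: a fetcher that follows redirects, or that resolves the hostname a second time when connecting, can still be steered to an internal address, so redirects should be disabled or re-validated and the connection pinned to the address that was checked.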

Exploiting this vulnerability could enable attackers to steal cloud credentials, access internal services not exposed to the internet, perform port scans on internal networks, and facilitate lateral movement within the network.

Cloud security firm Sysdig reported detecting the first exploitation attempt against its honeypot systems 12 hours and 31 minutes after the vulnerability was published on GitHub. The attack originated from the IP address 103.116.72[.]119. During an eight-minute session, the attacker used the vision-language image loader as a generic HTTP SSRF primitive to port-scan the internal network behind the model server. Targets included the AWS Instance Metadata Service (IMDS), Redis, MySQL, a secondary HTTP administrative interface, and an out-of-band (OOB) DNS exfiltration endpoint.

The attack, detected on April 22, 2026, at 03:35 a.m. UTC, involved 10 distinct requests across three phases. The attacker switched between vision-language models such as `internlm-xcomposer2` and `OpenGVLab/InternVL2-8B`, likely to avoid detection. The three phases were: targeting AWS IMDS and Redis instances, testing egress with an OOB DNS callback to `requestrepo[.]com` to confirm the reach of the SSRF vulnerability, and port scanning the loopback interface (`127.0.0[.]1`).
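Defenders reviewing their own request logs can look for the same target classes. The following is a minimal triage sketch, assuming the image URLs submitted to the model server have already been extracted from the logs. The `classify_target` helper and its labels are hypothetical, and the sample URLs in the comments are invented for illustration rather than taken from Sysdig's report.

```python
import ipaddress
from urllib.parse import urlsplit

# Link-local address used by the AWS Instance Metadata Service.
IMDS_ADDRESS = ipaddress.ip_address("169.254.169.254")


def classify_target(url: str) -> str:
    """Label a logged image URL by the kind of host it points at."""
    host = urlsplit(url).hostname or ""
    try:
        addr = ipaddress.ip_address(host)
    except ValueError:
        # Not an IP literal: a DNS name, possibly an OOB callback domain.
        return "loopback" if host == "localhost" else "hostname"
    if addr == IMDS_ADDRESS:
        return "cloud-metadata"
    if addr.is_loopback:
        return "loopback"
    if addr.is_private or addr.is_link_local:
        return "internal"
    return "external-ip"


# Invented examples of what each phase could look like in a log:
#   classify_target("http://169.254.169.254/latest/meta-data/")  -> cloud-metadata
#   classify_target("http://127.0.0.1:6379/")                    -> loopback
#   classify_target("http://token.oob.example/")                 -> hostname
```

Any `cloud-metadata`, `loopback`, or `internal` hit in a field that should only ever contain public image links is worth investigating, as is a burst of requests that walks through several ports on the same host.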

This incident underscores how quickly threat actors exploit newly disclosed vulnerabilities, often before users can apply the necessary fixes. Sysdig noted a pattern over the past six months in which critical vulnerabilities in AI infrastructure components are weaponized within hours of advisory publication, regardless of the size of their install base. Generative AI (GenAI) accelerates this trend, as detailed advisories can serve as prompts for AI models to generate potential exploits.