Apple’s App Store Under Fire for Hosting Deepfake Nonconsensual Porn Apps

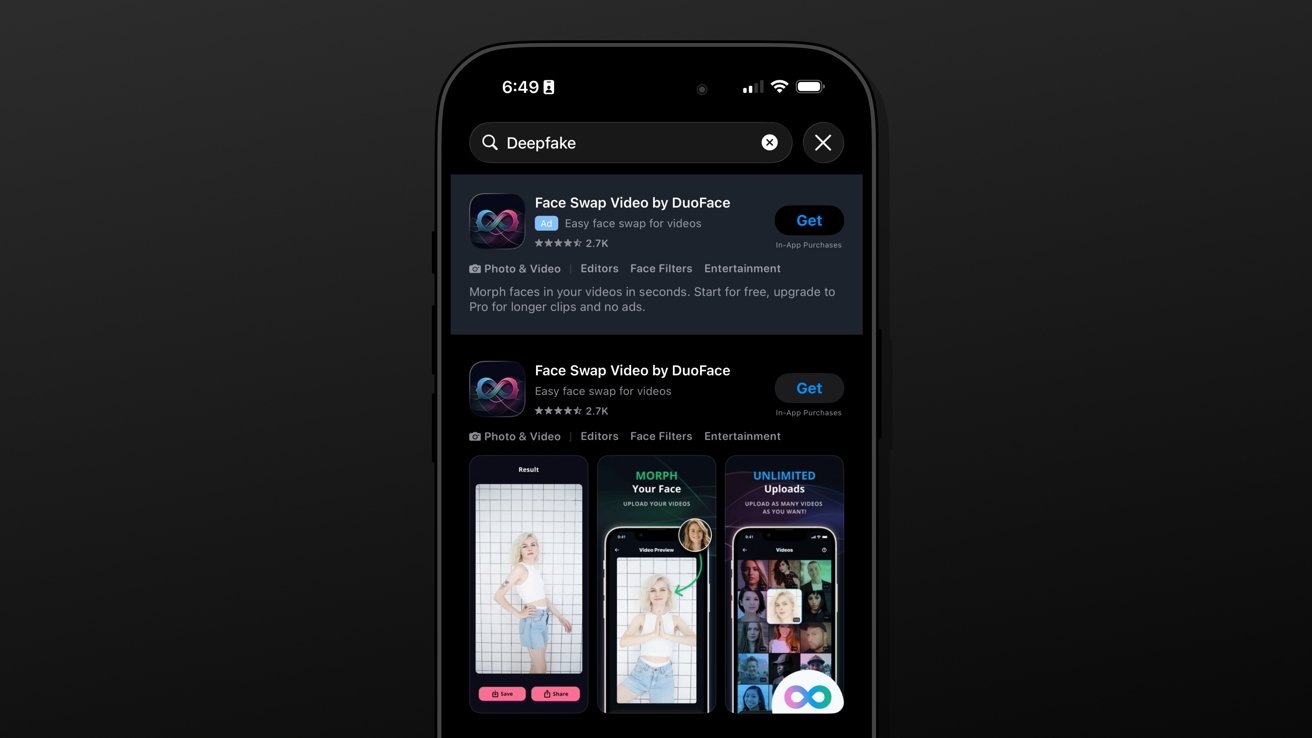

The proliferation of deepfake technology has introduced a troubling trend: the creation and dissemination of nonconsensual pornographic content. Several applications that facilitate such content have been found on Apple’s App Store, raising significant concerns about the platform’s content moderation practices.

The Rise of Deepfake ‘Nudify’ Applications

Deepfake technology employs artificial intelligence to manipulate or generate visual and audio content, making it appear authentic. A particularly disturbing application of this technology is the creation of “nudify” apps, which digitally remove clothing from images of people without their consent. These apps have gained traction, with numerous examples identified on both the App Store and the Google Play Store.

In a study conducted by the Tech Transparency Project, researchers uncovered 47 such apps on the App Store and 55 on the Play Store. These applications, often discovered through simple searches for terms like “nudify” and “undress,” have collectively amassed over 705 million downloads globally and generated approximately $117 million in revenue. These figures underscore how accessible and widely used the apps are, despite their unethical nature.

Apple’s Content Moderation Challenges

Apple’s App Store Review Guidelines explicitly prohibit applications containing pornographic material or content that is overtly sexual in nature. Despite these clear guidelines, the presence of deepfake apps suggests lapses in the App Store’s review and approval processes. The guidelines also mandate that apps with user-generated content implement mechanisms to filter objectionable material, a requirement that many of these deepfake apps fail to meet.

Upon being alerted to the presence of these applications, Apple took action by removing 28 of them from the App Store. However, this reactive approach highlights the challenges Apple faces in proactively identifying and eliminating such content. The reliance on external reports to address these issues raises questions about the effectiveness of Apple’s internal monitoring systems.

The Grok Controversy

The issue of deepfake nonconsensual pornography extends beyond third-party applications. Elon Musk’s AI chatbot, Grok, integrated into the social media platform X (formerly Twitter), faced significant backlash for generating pornographic images of non-consenting adults and of minors. This led to widespread criticism and calls for accountability.

In response, Apple reportedly threatened to remove the Grok app from the App Store unless the deepfake generation issues were addressed. While some changes were implemented, the app was never removed, leading to further scrutiny of Apple’s enforcement of its content policies. This situation underscores the complexities involved in moderating content generated by AI technologies and the need for robust oversight mechanisms.

Legal and Ethical Implications

The dissemination of nonconsensual deepfake pornography carries severe legal and ethical ramifications. Victims often experience significant emotional distress, reputational damage, and violations of privacy. The ease with which these applications can be accessed and used exacerbates the potential for harm.

Enforcement against such content has itself led to legal disputes. For example, an AI startup sued Apple over the removal of its apps from the App Store, alleging arbitrary enforcement of App Store rules. This lawsuit highlights the ongoing tensions between app developers and platform operators regarding content moderation and the enforcement of guidelines.

The Path Forward

Addressing the proliferation of deepfake nonconsensual pornography requires a multifaceted approach:

1. Enhanced Content Moderation: Apple must strengthen its App Store review processes to proactively identify and remove applications that facilitate the creation of nonconsensual explicit content. This includes implementing advanced detection algorithms and increasing human oversight.

2. Clearer Guidelines and Enforcement: Apple should provide more explicit guidelines regarding the use of AI technologies in applications and ensure consistent enforcement of these policies. Transparency in the review and removal processes can help build trust among users and developers.

3. Collaboration with Stakeholders: Engaging with legal experts, ethicists, and advocacy groups can aid in developing comprehensive strategies to combat the misuse of deepfake technology. Collaborative efforts can lead to the creation of industry standards and best practices.

4. User Education: Educating users about the ethical implications and potential harms associated with deepfake technology can deter misuse and encourage responsible behavior. Providing resources and support for victims is also crucial.

The challenges posed by deepfake nonconsensual pornography are significant and multifaceted. As technology continues to evolve, so too must the strategies employed by platform operators like Apple to ensure the safety and well-being of their users. Proactive measures, clear policies, and collaborative efforts are essential in addressing this pressing issue.