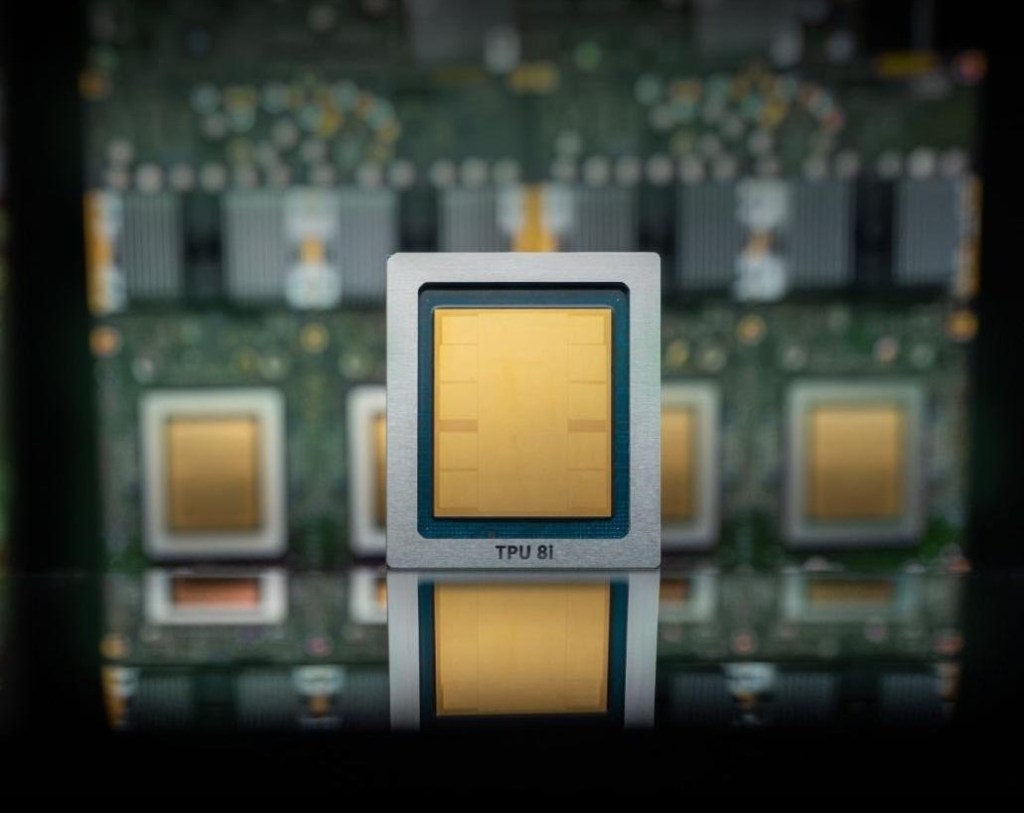

Google Cloud Unveils TPU 8t and TPU 8i to Revolutionize AI Processing

Google Cloud has introduced the eighth generation of its custom-built tensor processing units (TPUs), split into two distinct chips: the TPU 8t, designed for AI model training, and the TPU 8i, optimized for inference. ([techcrunch.com](https://techcrunch.com/2026/04/22/google-cloud-next-new-tpu-ai-chips-compete-with-nvidia/?utm_source=openai))

Enhanced Performance and Efficiency

Google reports that, compared with their predecessors, the TPU 8t and TPU 8i can train AI models up to three times faster and deliver 80% better performance per dollar. They also allow more than one million TPUs to be linked in a single cluster, providing substantial computational power while reducing energy consumption and operational costs. ([techcrunch.com](https://techcrunch.com/2026/04/22/google-cloud-next-new-tpu-ai-chips-compete-with-nvidia/?utm_source=openai))
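To put those headline figures in concrete terms, the short sketch below converts them into cost and time for a fixed workload. The baseline cost and training time are hypothetical numbers chosen only for illustration, and the calculation assumes the "up to" figures are fully realized, which is a best case rather than a typical result.

```python
# Rough arithmetic on the reported figures; not a benchmark.
# Assumption: the "up to" gains apply in full to the whole workload.

def cost_ratio(perf_per_dollar_gain: float) -> float:
    """Fraction of the old cost needed to do the same work
    after a fractional gain in performance per dollar (0.80 = 80%)."""
    return 1.0 / (1.0 + perf_per_dollar_gain)


def time_ratio(speedup: float) -> float:
    """Fraction of the old wall-clock time after an N-times speedup."""
    return 1.0 / speedup


# Hypothetical baseline for a single training run (illustrative only).
baseline_cost_usd = 100_000.0
baseline_hours = 300.0

new_cost_usd = baseline_cost_usd * cost_ratio(0.80)  # 80% better perf per dollar
new_hours = baseline_hours * time_ratio(3.0)         # up to 3x faster training

print(f"Cost for the same work: ${new_cost_usd:,.0f} (was ${baseline_cost_usd:,.0f})")
print(f"Training time: {new_hours:.0f} h (was {baseline_hours:.0f} h)")
```

Under these assumptions, an 80% gain in performance per dollar means the same work costs about 56% of what it did before, a saving of roughly 44% rather than 80%.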

Strategic Positioning in the AI Hardware Landscape

Despite these advancements, Google is not positioning its TPUs as direct competitors to Nvidia’s GPUs. Instead, the company aims to complement existing Nvidia-based systems within its infrastructure. Google has announced plans to incorporate Nvidia’s latest chip, Vera Rubin, into its cloud services later this year, indicating a collaborative approach rather than outright competition. ([techcrunch.com](https://techcrunch.com/2026/04/22/google-cloud-next-new-tpu-ai-chips-compete-with-nvidia/?utm_source=openai))

Industry-Wide Trends in AI Chip Development

The introduction of TPU 8t and TPU 8i reflects a broader industry trend where major cloud providers are developing proprietary AI chips. Companies like Microsoft and Amazon have also ventured into custom AI hardware to enhance their cloud offerings. For instance, Microsoft recently unveiled the Maia 200 chip, designed to scale AI inference tasks efficiently. ([techcrunch.com](https://techcrunch.com/2026/01/26/microsoft-announces-powerful-new-chip-for-ai-inference/?utm_source=openai))

Collaborative Efforts with Nvidia

Beyond offering Nvidia hardware, Google is working with Nvidia on computer networking technology, with the aim of making Nvidia-based systems run more efficiently within Google’s cloud infrastructure. The partnership reflects both companies’ interest in advancing AI capabilities by combining their expertise. ([techcrunch.com](https://techcrunch.com/2026/04/22/google-cloud-next-new-tpu-ai-chips-compete-with-nvidia/?utm_source=openai))

Implications for the AI Ecosystem

With the TPU 8t and TPU 8i, Google is offering customers more efficient and cost-effective options for AI model training and inference. As demand for AI processing power continues to grow, hardware of this kind will be needed to meet the computational requirements of modern AI applications.