Osaurus: Revolutionizing AI Integration on Mac with Local and Cloud Models

Osaurus is an open-source, Apple-only large language model (LLM) server that lets Mac users run both local and cloud-based AI models while keeping control over their data and tools. It is part of a broader push to bring AI models directly into personal computing environments.

The Genesis of Osaurus

Osaurus traces back to Dinoki, a desktop AI companion envisioned as an AI-powered Clippy. Dinoki users questioned why they should pay for the application and also bear the ongoing cost of token usage, the common pricing model in which AI services charge for processing prompts and generating responses. That feedback prompted Terence Pae, a former software engineer at Tesla and Netflix, to explore running AI models locally on personal devices.

Pae saw that a Mac could host AI assistants capable of browsing files, reading system configuration, and interacting with the browser without relying on external servers. That vision became Osaurus, a personal AI assistant designed to run directly on the user's own hardware.

Functionality and Flexibility

Osaurus lets users connect to a variety of AI models, whether hosted locally or accessed through cloud providers such as OpenAI and Anthropic. Users can therefore choose the model that best fits the requirements of each task.

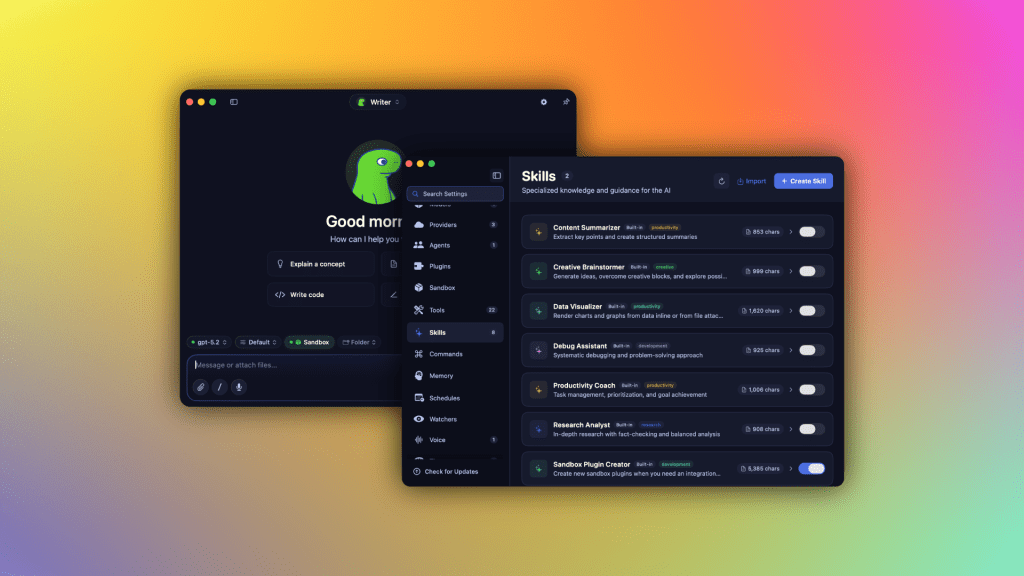

Osaurus operates as a harness: a control layer that brings different AI models, tools, and workflows together behind a single interface. Unlike developer-centric tools such as OpenClaw or Hermes, which often require technical proficiency and may present security vulnerabilities, Osaurus is designed with user-friendliness and security in mind. It runs within a hardware-isolated virtual sandbox, which confines AI processes to a controlled environment and helps protect user data and system integrity.
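To make the idea of a harness concrete, here is a minimal sketch of a control layer that routes a prompt to whichever model backend the user selects. It is not Osaurus's actual code or API: the `Harness` class, the backend names, and the stub functions are all hypothetical, and the stubs return canned strings instead of running a model or calling a provider.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict

# A backend is any callable that turns a prompt into a reply.
Backend = Callable[[str], str]


@dataclass
class Harness:
    """Hypothetical control layer that routes prompts to named backends."""

    backends: Dict[str, Backend] = field(default_factory=dict)

    def register(self, name: str, backend: Backend) -> None:
        # Make a backend selectable under the given name.
        self.backends[name] = backend

    def ask(self, model: str, prompt: str) -> str:
        # Fail loudly if the user picks a model that was never registered.
        if model not in self.backends:
            raise KeyError(f"no backend registered for model {model!r}")
        return self.backends[model](prompt)


def local_stub(prompt: str) -> str:
    # Stand-in for an on-device model; a real backend would run inference.
    return f"[local] {prompt}"


def cloud_stub(prompt: str) -> str:
    # Stand-in for a cloud provider; no network request is made here.
    return f"[cloud] {prompt}"


harness = Harness()
harness.register("local-model", local_stub)
harness.register("cloud-model", cloud_stub)

print(harness.ask("local-model", "Summarize my notes"))
print(harness.ask("cloud-model", "Summarize my notes"))
```

The point of the design is that the calling code stays the same whether the reply comes from a local or a cloud model; only the registered backend changes.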

System Requirements and Performance

Running AI models locally is inherently resource-intensive. To run local models effectively, Osaurus recommends a minimum of 64GB of RAM, and larger models such as DeepSeek V4 need approximately 128GB. Despite these substantial requirements, Pae is optimistic about the trajectory of local AI development.
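A rough back-of-the-envelope calculation shows why RAM is the limiting factor. The sketch below estimates the memory needed for model weights alone as parameter count times bits per weight, divided by eight to get bytes. It is an approximation, not Osaurus's sizing method: it ignores the context cache, runtime overhead, and the operating system, and the 70-billion-parameter model and the 75% headroom factor are assumptions chosen purely for illustration.

```python
def estimate_weight_memory_gb(params_billions: float, bits_per_weight: int) -> float:
    """Approximate memory for model weights alone, in decimal gigabytes.

    One billion parameters at 8 bits per weight occupy about 1 GB, so the
    result is simply params_billions * bits_per_weight / 8.
    """
    return params_billions * bits_per_weight / 8


def fits_in_ram(params_billions: float, bits_per_weight: int,
                ram_gb: float, usable_fraction: float = 0.75) -> bool:
    """Check whether the weights fit in an assumed usable share of RAM.

    usable_fraction is a hypothetical headroom factor that leaves room for
    the operating system, other applications, and inference overhead.
    """
    needed = estimate_weight_memory_gb(params_billions, bits_per_weight)
    return needed <= ram_gb * usable_fraction


# Hypothetical 70B-parameter model at two precisions.
print(estimate_weight_memory_gb(70, 4))   # 4-bit quantized weights
print(estimate_weight_memory_gb(70, 16))  # 16-bit weights
print(fits_in_ram(70, 4, ram_gb=64))
print(fits_in_ram(70, 16, ram_gb=128))
```

Under these assumptions the 4-bit version needs about 35 GB and fits on a 64GB machine, while the 16-bit version needs about 140 GB and does not fit even in 128GB, which is why quantization matters so much for local use.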

He points to a marked rise in "intelligence per watt," an informal measure of how much useful model capability is delivered for the power consumed. Over the past year, Pae says, local AI has gone from struggling with basic sentence completion to carrying out complex tasks such as operating tools, writing code, using a browser, and shopping online.

Supported Models and Integrations

Osaurus supports a range of AI models, including MiniMax M2.5, Gemma 4, Qwen3.6, GPT-OSS, Llama, and DeepSeek V4. It also supports Apple's on-device foundation models and Liquid AI's LFM family of on-device models. On the provider side, Osaurus connects to OpenAI, Anthropic, Gemini, xAI/Grok, Venice AI, and OpenRouter, as well as to Ollama and LM Studio, which are typically run as local model servers rather than cloud services.

Osaurus also acts as a full Model Context Protocol (MCP) server, so any MCP-compatible client can access its tools. The platform includes more than 20 native plug-ins, covering Mail, Calendar, Vision, macOS Use, XLSX, PPTX, Browser, Music, Git, Filesystem, Search, Fetch, and more. Recent updates have added voice capabilities.
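For readers unfamiliar with MCP, the protocol exchanges JSON-RPC 2.0 messages, and clients discover and invoke tools with the `tools/list` and `tools/call` methods. The sketch below only builds those request messages; it does not connect to Osaurus, and it skips the `initialize` handshake that a real session performs first. The tool name `search` and its `query` argument are hypothetical placeholders, since the actual tool names and schemas come from the server's `tools/list` response.

```python
import json
from itertools import count
from typing import Any, Dict, Optional

# Monotonic request ids, as JSON-RPC requires an id on every request.
_ids = count(1)


def jsonrpc_request(method: str,
                    params: Optional[Dict[str, Any]] = None) -> Dict[str, Any]:
    """Build a JSON-RPC 2.0 request object for the given method."""
    message: Dict[str, Any] = {
        "jsonrpc": "2.0",
        "id": next(_ids),
        "method": method,
    }
    if params is not None:
        message["params"] = params
    return message


# Ask the server which tools it exposes.
list_tools = jsonrpc_request("tools/list")

# Invoke one tool by name; "search" and "query" are hypothetical examples.
call_tool = jsonrpc_request(
    "tools/call",
    {"name": "search", "arguments": {"query": "local LLM on Mac"}},
)

print(json.dumps(list_tools))
print(json.dumps(call_tool))
```

A client would send these messages over whichever transport the server offers and match each response to its request by `id`.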

Market Reception and Future Prospects

Since its public release nearly a year ago, Osaurus has been downloaded more than 112,000 times, a sign of strong interest. It competes with other tools for running AI models locally, such as Ollama, Msty, and LM Studio, but differentiates itself through a user-friendly interface and a broad feature set that is accessible to non-developers.

The founding team, including co-founder Sam Yoo, is currently participating in the New York-based startup accelerator Alliance. They are exploring avenues to extend Osaurus’s offerings to businesses in sectors like legal and healthcare, where local LLMs can address privacy concerns.

Pae envisions a future in which increasingly capable local models reduce reliance on cloud-based AI data centers. He suggests that deploying devices such as the Mac Studio on-premises could deliver cloud-comparable AI capabilities with substantial power savings.

Conclusion

Osaurus gives Mac users a versatile and secure platform for using both local and cloud-based AI models on their own machines. Its development reflects a broader trend toward AI tools for individuals and businesses that are both powerful and privacy-conscious.