Unveiling the Hidden Dangers of Cross-App Permissions: How Toxic Combinations Threaten Data Security

In the rapidly evolving digital landscape, the integration of applications through AI agents, connectors, and OAuth grants has become commonplace. While these integrations offer enhanced functionality and streamlined workflows, they also introduce complex security challenges. A recent incident involving Moltbook, a social network designed for AI agents, underscores the potential risks associated with cross-app permissions and the formation of toxic combinations.

The Moltbook Incident: A Case Study in Cross-App Vulnerabilities

On January 31, 2026, security researchers disclosed a significant data exposure involving Moltbook. The platform had inadvertently left its database openly accessible, exposing 35,000 email addresses and 1.5 million agent API tokens across 770,000 active agents. More alarmingly, private messages within the database contained plaintext third-party credentials, including OpenAI API keys shared between agents. These credentials sat alongside the very tokens needed to hijack the agents themselves.

This incident exemplifies a toxic combination—a scenario where permissions between multiple applications intersect in unintended and insecure ways. In this case, AI agents acted as intermediaries, holding credentials for both their host platform and external services. Neither platform owner had visibility into this intersection, creating a blind spot that attackers could exploit.

Understanding Toxic Combinations

Toxic combinations arise when an AI agent, integration, or Model Context Protocol (MCP) server bridges two or more applications through OAuth grants, API scopes, or tool-use chains. Each application may appear secure in isolation, but the connections between them introduce vulnerabilities that traditional security reviews often overlook.
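One way to make the "bridge" idea concrete is to group an organization's grants by identity and flag any identity that holds grants in two or more applications. The sketch below is a minimal illustration under stated assumptions: the `Grant` record, the `find_bridges` helper, and the identity, app, and scope names are hypothetical placeholders, not any vendor's API or real scope strings. Real grant data would have to be exported from each platform's admin tooling.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class Grant:
    identity: str  # agent, bot, MCP server, or integration (hypothetical IDs)
    app: str       # application the grant applies to
    scope: str     # placeholder permission label, not a real vendor scope


def find_bridges(grants: list[Grant]) -> dict[str, dict[str, set[str]]]:
    """Return identities holding grants in two or more distinct apps,
    mapped to the scopes they hold in each app."""
    by_identity = defaultdict(lambda: defaultdict(set))
    for g in grants:
        by_identity[g.identity][g.app].add(g.scope)
    return {
        identity: {app: set(scopes) for app, scopes in apps.items()}
        for identity, apps in by_identity.items()
        if len(apps) >= 2  # a single-app identity is not a bridge
    }


grants = [
    Grant("ide-slack-connector", "ide", "code:read"),
    Grant("ide-slack-connector", "slack", "messages:write"),
    Grant("drive-backup-bot", "google-drive", "files:read"),
]
bridges = find_bridges(grants)
print(sorted(bridges))  # ['ide-slack-connector']
```

Each identity returned here is a candidate for a cross-app review, even if every individual grant was approved by the respective app's administrator.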

Consider a developer who installs an MCP connector to enable their Integrated Development Environment (IDE) to post code snippets into a Slack channel. The Slack administrator approves the bot, and the IDE administrator approves the outbound connection. However, neither party evaluates the trust relationship established between the source code and the messaging platform. This oversight can lead to scenarios where prompt injections in the IDE push confidential code into Slack, or instructions embedded in Slack influence the IDE’s operations.
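The IDE-to-Slack example above comes down to direction: content can move from app A to app B whenever a single identity can read A and write B. A minimal sketch, assuming access can be reduced to one read flag and one write flag per application (real OAuth scopes are far more granular), enumerates those source-to-sink paths. `AppAccess`, `data_flow_paths`, and the app labels are hypothetical names for illustration only.

```python
from dataclasses import dataclass
from itertools import permutations


@dataclass
class AppAccess:
    read: bool = False   # identity can read content from this app
    write: bool = False  # identity can create or change content in this app


def data_flow_paths(access: dict[str, AppAccess]) -> list[tuple[str, str]]:
    """Return (source, sink) pairs where one identity can read from
    `source` and write to `sink`, so content can move between them."""
    return [
        (src, dst)
        for src, dst in permutations(access, 2)
        if access[src].read and access[dst].write
    ]


# Hypothetical connector that only posts IDE snippets into Slack.
posting_only = {"ide": AppAccess(read=True), "slack": AppAccess(write=True)}
print(data_flow_paths(posting_only))  # [('ide', 'slack')]

# Hypothetical connector that also reads Slack and can act in the IDE.
bidirectional = {
    "ide": AppAccess(read=True, write=True),
    "slack": AppAccess(read=True, write=True),
}
print(data_flow_paths(bidirectional))  # [('ide', 'slack'), ('slack', 'ide')]
```

The `ide -> slack` path is the route by which confidential code could be pushed into Slack; the `slack -> ide` path, present only when the connector also reads Slack and can act in the IDE, is the route by which instructions embedded in Slack could influence the IDE.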

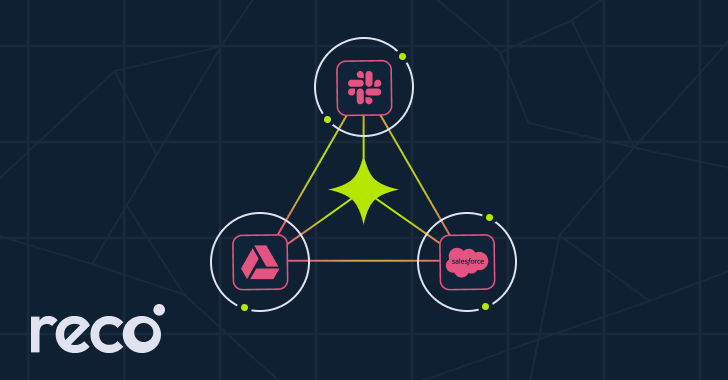

Similar risks emerge when AI agents link cloud storage services like Google Drive with Customer Relationship Management (CRM) platforms such as Salesforce, or when bots connect source code repositories to team communication channels. In each case, the intermediary creates a trust relationship that may not have been explicitly authorized or reviewed.

The Limitations of Single-App Security Reviews

Traditional access reviews focus on individual applications, assessing permissions and security measures within each silo. However, this approach fails to account for the complex web of interactions facilitated by AI agents, MCP servers, and third-party connectors. These entities often operate across multiple applications, establishing trust relationships that are not captured in single-app reviews.

Non-human identities, such as service accounts and bots, now outnumber human users in many Software as a Service (SaaS) environments. These identities often possess extensive permissions across various platforms, creating potential attack vectors that are difficult to monitor and control. The Cloud Security Alliance’s State of SaaS Security 2025 report highlighted that 56% of organizations are concerned about over-privileged API access across their SaaS-to-SaaS integrations.

Strategies for Mitigating Cross-App Security Risks

Addressing the challenges posed by toxic combinations requires a shift from isolated application reviews to a holistic examination of cross-app interactions. Organizations can implement the following strategies to enhance their security posture:

1. Comprehensive Inventory of Non-Human Identities: Maintain a centralized register of all AI agents, bots, MCP servers, and OAuth integrations. Each entry should include an owner and a scheduled review date to ensure ongoing oversight.

2. Assessment of Cross-App Scope Grants: Regularly evaluate permissions granted across applications. Pay particular attention to scenarios where an identity holds write permissions in one application and read permissions in another, as these combinations can introduce unforeseen risks.

3. Implementation of Cross-App Access Reviews: Develop and enforce policies that require periodic reviews of access rights spanning multiple applications. This practice helps identify and remediate toxic combinations before they can be exploited.

4. Enhanced Monitoring and Logging: Deploy monitoring tools capable of tracking activities across integrated applications. Comprehensive logging enables the detection of anomalous behaviors that may indicate security breaches.

5. User Education and Awareness: Educate developers, administrators, and end-users about the potential risks associated with cross-app integrations. Promoting a culture of security awareness can lead to more cautious and informed decisions regarding application permissions.
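Items 1 and 3 above can be prototyped with a simple register. The sketch below is a minimal illustration, assuming a hypothetical `NonHumanIdentity` record carrying the owner and scheduled review date that the first item calls for. The hypothetical `review_findings` helper flags entries with no owner, entries past their review date, and identities spanning two or more applications, which under the third item would be reviewed as a cross-app unit rather than app by app. Field names, kinds, and dates are illustrative.

```python
from dataclasses import dataclass
from datetime import date
from typing import Optional


@dataclass
class NonHumanIdentity:
    name: str
    kind: str             # e.g. "ai_agent", "bot", "mcp_server", "oauth_integration"
    owner: Optional[str]  # accountable person or team; None if unassigned
    apps: set[str]        # applications the identity holds grants in
    next_review: date     # scheduled review date


def review_findings(register: list[NonHumanIdentity], today: date) -> list[str]:
    """Return human-readable findings for the register as of `today`."""
    findings = []
    for nhi in register:
        if not nhi.owner:
            findings.append(f"{nhi.name}: no owner")
        if nhi.next_review < today:
            findings.append(f"{nhi.name}: review overdue since {nhi.next_review.isoformat()}")
        if len(nhi.apps) >= 2:
            # Multi-app identities need a review that spans all their apps.
            findings.append(f"{nhi.name}: spans {', '.join(sorted(nhi.apps))}; needs cross-app review")
    return findings


register = [
    NonHumanIdentity("ide-slack-connector", "mcp_server", None,
                     {"ide", "slack"}, date(2026, 1, 15)),
    NonHumanIdentity("crm-sync-bot", "bot", "sales-ops",
                     {"salesforce"}, date(2026, 6, 1)),
]
for line in review_findings(register, today=date(2026, 2, 1)):
    print(line)
```

With these sample entries, only `ide-slack-connector` produces findings: it has no owner, its review date has passed, and it spans two applications.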

Conclusion

The integration of applications through AI agents and connectors offers significant benefits but also introduces complex security challenges. The Moltbook incident serves as a stark reminder of the potential dangers posed by toxic combinations resulting from cross-app permissions. By adopting a holistic approach to security that encompasses cross-app interactions, organizations can better protect their data and systems from emerging threats.