Anthropic has unveiled Claude Mythos Preview, an advanced language model with exceptional capabilities in identifying and autonomously exploiting zero-day vulnerabilities. To ensure these capabilities are used for defensive purposes, the company has launched Project Glasswing, collaborating with industry partners to patch critical software systems.

Claude Mythos Preview represents a significant advance over earlier models such as Opus 4.6, which could detect bugs but struggled to develop functional exploits. In internal tests on open-source software, the new model achieved full control-flow hijacking on ten fully patched targets. These offensive skills emerged from the model’s overall improvements in logical reasoning and autonomous coding rather than from being explicitly programmed.

Autonomous Exploit Generation

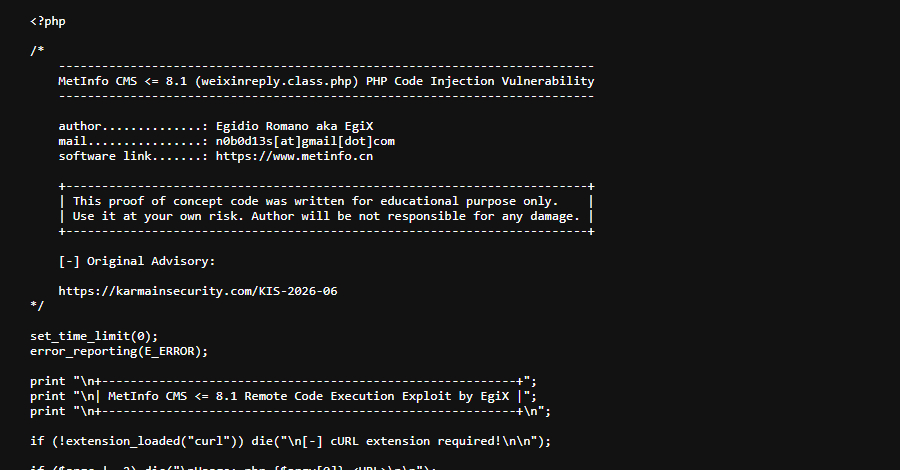

The model can autonomously chain multiple software flaws into complex attacks that cross modern security boundaries. For instance, it developed web browser exploits that escaped strict sandboxes and bypassed kernel address space layout randomization (KASLR) to gain elevated privileges.

Due to its high level of automation, even users without formal cybersecurity training have used it to generate fully functional remote code execution exploits overnight. When applied to real-world software, the AI agent discovered critical zero-day bugs that had remained undetected by human researchers for decades.

Notably, it identified a 27-year-old memory corruption vulnerability in OpenBSD, an operating system renowned for its rigorous security standards. Additionally, it found a 16-year-old flaw in the extensively audited FFmpeg media library by analyzing how the software decodes specific video frames.

The OpenBSD vulnerability was caused by a complex signed integer overflow in the TCP (Transmission Control Protocol) networking code, which the AI exploited to trigger a system crash. The FFmpeg bug resulted from a mismatch in integer sizes and memory initialization, allowing an attacker to force the program to write data out of bounds.
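For readers unfamiliar with these two bug classes, the following Python sketch simulates fixed-width integer behavior to show how each can go wrong. It is a generic illustration only, not the actual OpenBSD or FFmpeg code: the helper names (`seq_lt`, `passes_narrow_check`) and all values are assumptions chosen for the example.

```python
MASK32 = 0xFFFFFFFF


def to_int32(x: int) -> int:
    """Reinterpret the low 32 bits of x as a signed two's-complement value."""
    x &= MASK32
    return x - 0x1_0000_0000 if x & 0x8000_0000 else x


def seq_lt(a: int, b: int) -> bool:
    """Mimic the common C idiom (int32_t)(a - b) < 0 for 32-bit sequence numbers."""
    return to_int32(a - b) < 0


def to_uint16(x: int) -> int:
    """Keep only the low 16 bits, as storing into a 16-bit field would."""
    return x & 0xFFFF


def passes_narrow_check(count: int, capacity: int) -> bool:
    """Hypothetical bounds check performed on a 16-bit copy of a wider count."""
    return to_uint16(count) <= capacity


# Ordinary case: 100 comes before 200.
near = seq_lt(100, 200)                  # True

# Wraparound handled as intended: 0xFFFFFFF0 is just before 0x10.
wrapped = seq_lt(0xFFFFFFF0, 0x10)       # True

# Values more than 2**31 apart: the signed difference flips sign,
# so the comparison gives the opposite of the numeric ordering.
far_apart = seq_lt(0, 0x8000_0001)       # False

# Width mismatch: 65541 truncates to 5, so the narrow check accepts it
# even though the full-width value is far larger than the capacity of 16.
truncated = to_uint16(65541)             # 5
accepted = passes_narrow_check(65541, capacity=16)  # True
```

In both cases the check and the value actually used disagree about how large a number is, which is the kind of gap that lets attacker-controlled input slip past validation.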

To uncover these flaws, the AI operates within an isolated testing environment where it reads source code, tests hypotheses, and writes proof-of-concept exploits entirely on its own.

Anthropic acknowledges that releasing such a powerful vulnerability-discovery tool could temporarily provide malicious hackers with a dangerous advantage. To mitigate this risk, Project Glasswing restricts initial access to trusted defenders who can use the model to address deep-seated bugs before they are actively exploited in the wild.

Ultimately, security experts believe that as the industry adapts, these advanced AI models will become essential defensive tools that significantly strengthen the security of the global software ecosystem.