Our publication recently received an email tip from a security professional identifying themselves as “Jack,” raising concerns about two macOS applications currently ranking among the top downloads on Apple’s App Store in the United States. According to the source, these apps, despite being popular paid downloads, may be engaging in deceptive practices that mislead users and exploit App Store trust mechanisms. In addition, the same developer appears to be behind both of them, which could give the operation an unfair competitive advantage.

The following report was prepared by our publication’s technical team.

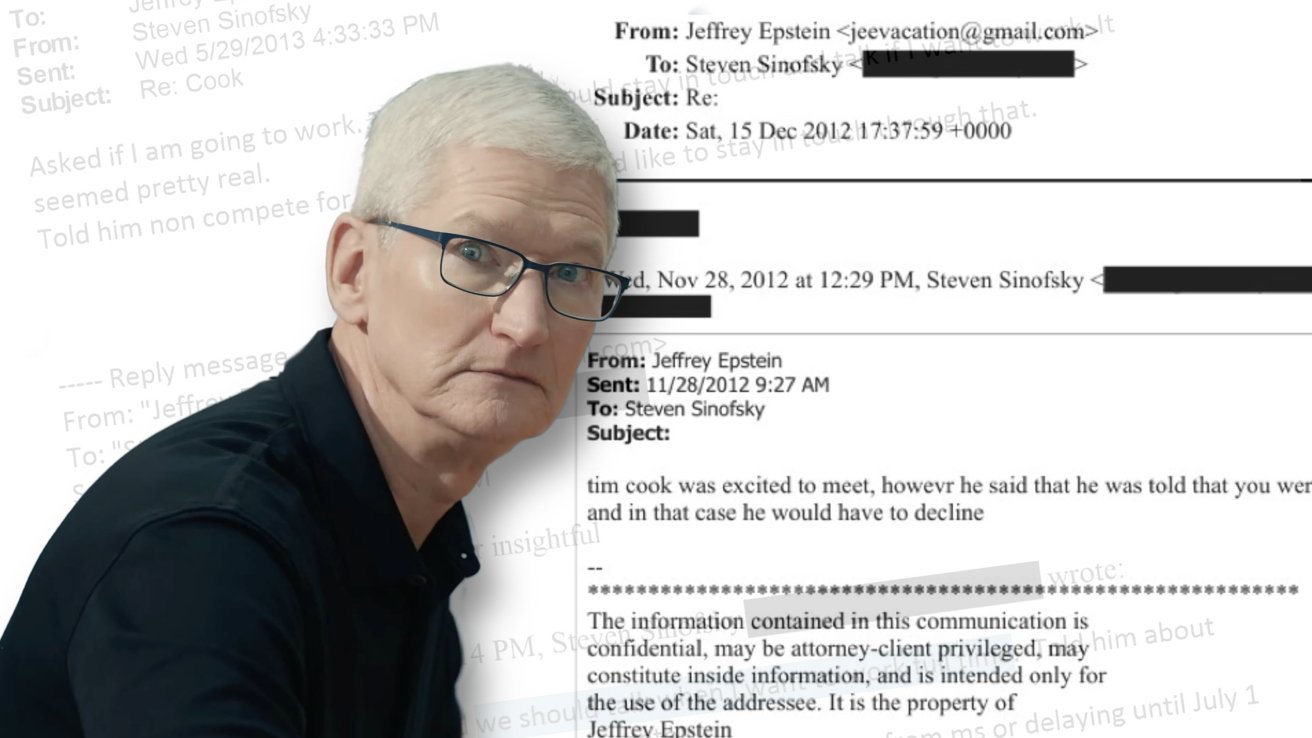

Application 1: AI ChatbotㆍAsk AI Anything 5.2

https://apps.apple.com/app/ai-chatbot%E3%86%8Dask-ai-anything-5-2/id6753711999

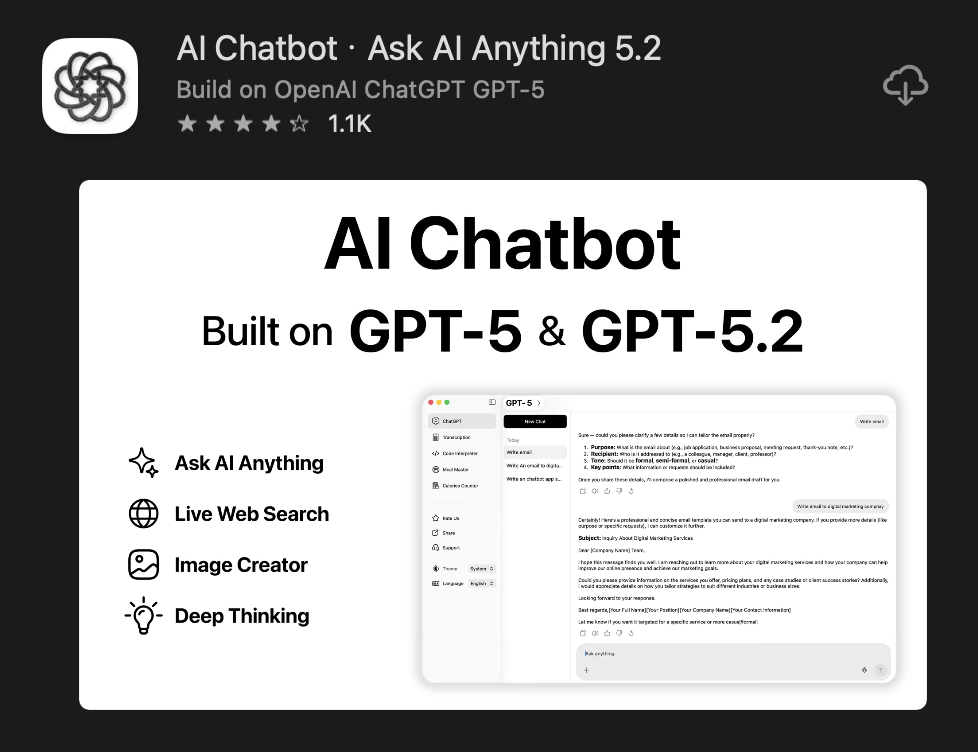

Application 2: AI Chatbot・Ask AI Anything 5.2

https://apps.apple.com/app/ai-chatbot-ask-ai-anything-5-2/id6752956793
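Although the two titles look the same, they are not identical strings. The first URL encodes the separator between “chatbot” and “ask” as %E3%86%8D, which decodes to U+318D HANGUL LETTER ARAEA, while the second title, as it renders here, appears to use U+30FB KATAKANA MIDDLE DOT. The short Python sketch below (standard library only) lists the non-ASCII characters in each string. The assumption that the second separator is U+30FB is ours, based on how the character displays, and should be checked against the live listing.

```python
import unicodedata
from urllib.parse import unquote

# Slug copied from the first App Store URL.
SLUG_1 = "ai-chatbot%E3%86%8Dask-ai-anything-5-2"
# Second title as listed; the separator is ASSUMED to be U+30FB (written as an escape).
TITLE_2 = "AI Chatbot\u30fbAsk AI Anything 5.2"


def non_ascii_chars(text: str) -> list[tuple[str, str, str]]:
    """Return (character, code point, Unicode name) for each non-ASCII character in text."""
    return [
        (ch, f"U+{ord(ch):04X}", unicodedata.name(ch, "<unnamed>"))
        for ch in text
        if ord(ch) > 0x7F
    ]


if __name__ == "__main__":
    # unquote() decodes the percent-encoded UTF-8 bytes back into a single character.
    print(non_ascii_chars(unquote(SLUG_1)))
    print(non_ascii_chars(TITLE_2))
```

Both characters render as a small centered dot, so the titles look alike in search results even though a plain string comparison treats them as different names.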

The following similarities indicate that the same developer is behind both accounts:

– the applications use virtually the same name and an icon that is a near one-to-one copy of the OpenAI/ChatGPT icon, creating what appears to be a deliberately misleading impression and causing user confusion about their legitimacy and affiliation.

– the app descriptions use nearly identical wording, structure, and feature lists, including the same claims about a “powerful AI assistant,” “real-time web search,” “advanced reasoning,” and support for multiple GPT models. The language follows the same template with only minor rephrasing, and both include identical legal links and disclaimers referencing OpenAI. This degree of overlap indicates that the descriptions were not written independently but copied and lightly modified, pointing to a shared source or a coordinated developer network.

– the applications use nearly identical privacy policies that follow the same structure, wording, and sequence of clauses, with only minor synonym changes between the HB and HA policies, such as “values your privacy” versus “respects your privacy.” Their descriptions of data collection, device information, and location tracking mirror each other, indicating that they were copied from a single template rather than written independently. In addition, both developers host their policies on Google Sites with matching layouts, navigation menus, and page design (see the Hadiqa Bashir and Hira Amin pages at https://sites.google.com/view/hadiqabashir/privacy-policy?authuser=0 and https://sites.google.com/view/hiraamin/privacy-policy?authuser=0). This further suggests that these apps are part of a coordinated setup that uses the same tools, templates, and infrastructure to create the appearance of separate entities while following a single pattern.

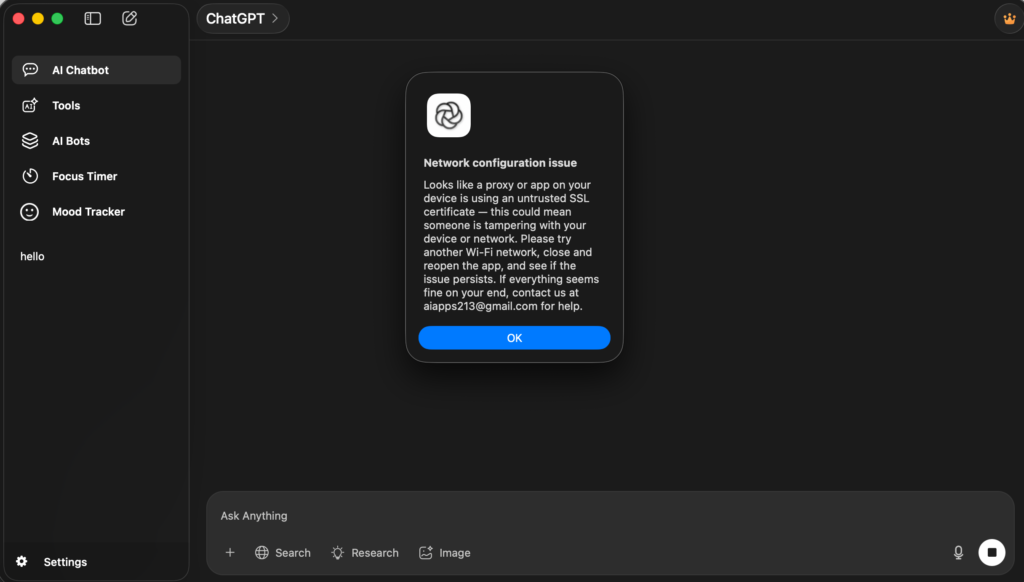

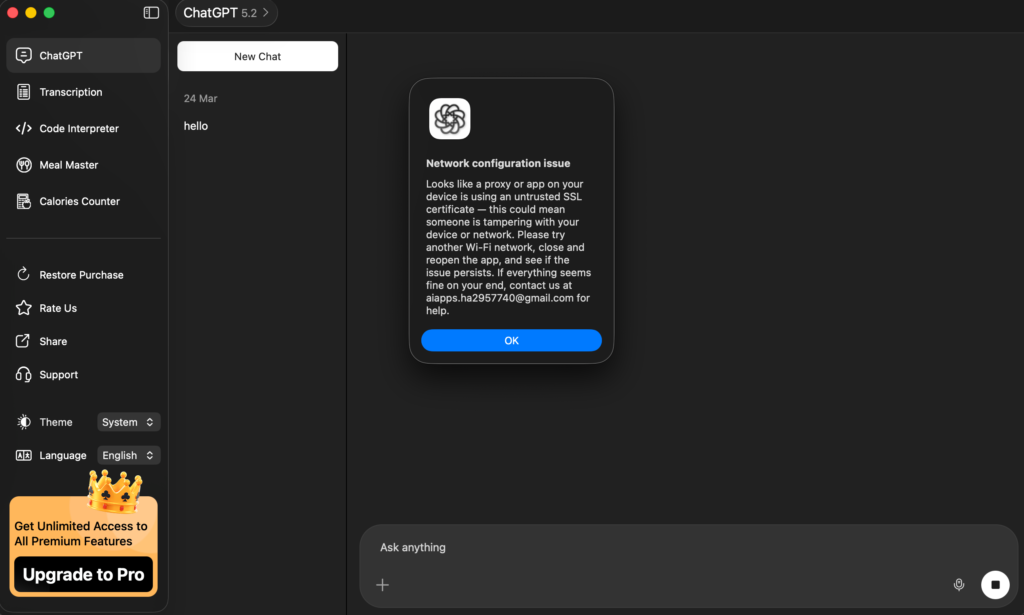

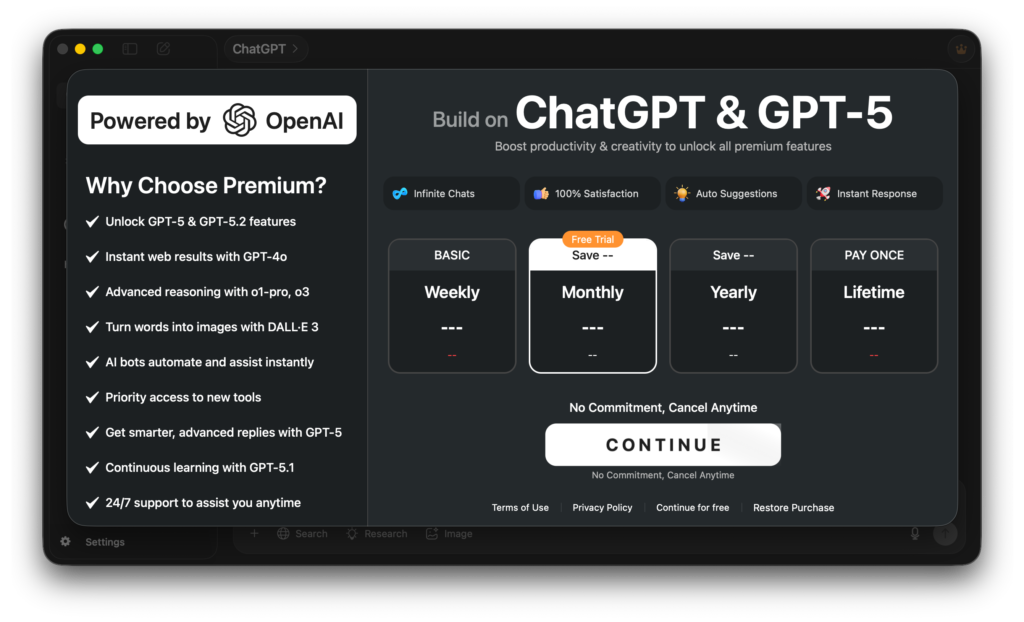

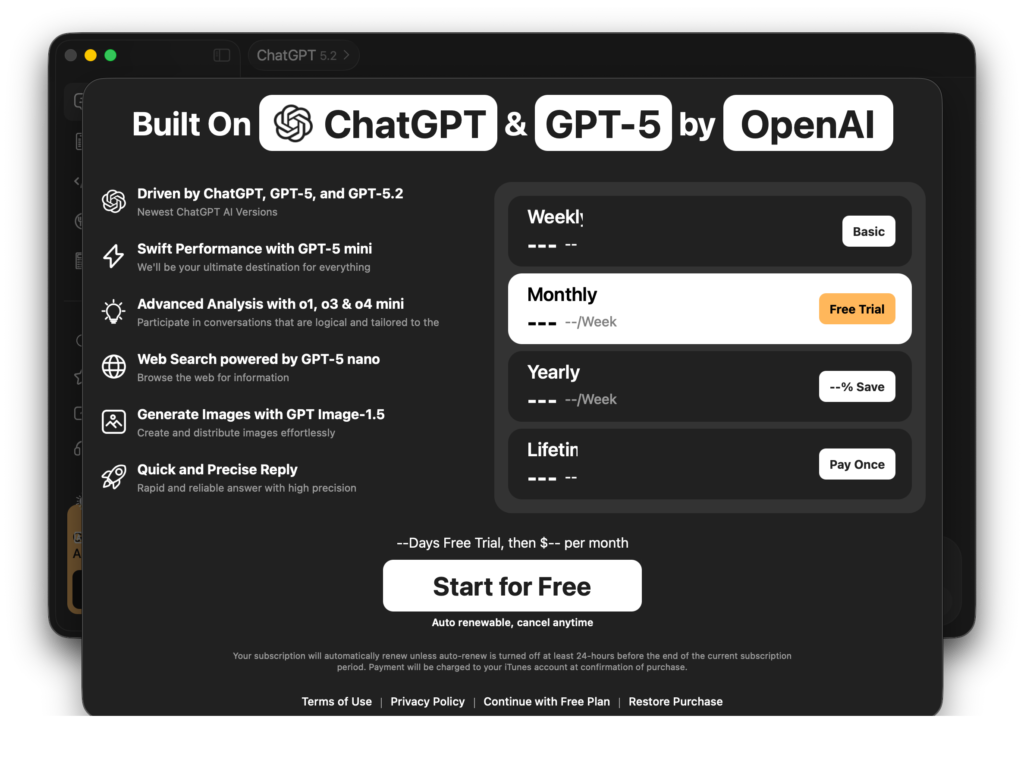

– both applications exhibit identical suspicious behavior in their internal UI, infrastructure, and network handling. They display the same “network configuration issue” error message, with nearly identical wording about “untrusted SSL certificates” and a suggestion that the user’s network may be compromised. This message does not match standard macOS system alerts and appears to be hardcoded in the apps. When our team analyzed the applications with Charles Proxy, both apps triggered exactly this message, indicating that they share the same logic for detecting and blocking proxied traffic. In addition, both rely on generic Gmail addresses for support instead of professional domain-based email, which suggests disposable infrastructure, and their interfaces (sidebar layout, features, and chat environment) are structurally identical. Finally, the paywall screens use the same wording, layout, and subscription options (weekly, monthly, yearly, lifetime), reinforcing that these are cloned applications built from a single template to mislead users and scale subscription-based monetization.
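The description and policy comparisons in this report were made by reading the texts side by side. One way to put a number on that kind of overlap is a word-level comparison with Python’s standard `difflib` module, sketched below. The helper names are our own, the two sample sentences are illustrative only (built around the quoted “values” versus “respects” swap, not copied from either policy), and any similarity threshold for calling two texts clones is a judgment call rather than a fixed rule.

```python
import re
from difflib import SequenceMatcher


def tokenize(text: str) -> list[str]:
    """Lowercase the text and keep only word tokens, so casing and punctuation are ignored."""
    return re.findall(r"[a-z0-9']+", text.lower())


def word_similarity(a: str, b: str) -> float:
    """difflib ratio over word sequences: 1.0 for identical wording, 0.0 when nothing is shared."""
    return SequenceMatcher(None, tokenize(a), tokenize(b), autojunk=False).ratio()


def shared_phrases(a: str, b: str, min_words: int = 3) -> list[str]:
    """Word runs of at least min_words that occur in both texts, in the order they appear in a."""
    ta, tb = tokenize(a), tokenize(b)
    matcher = SequenceMatcher(None, ta, tb, autojunk=False)
    return [
        " ".join(ta[block.a : block.a + block.size])
        for block in matcher.get_matching_blocks()
        if block.size >= min_words
    ]


if __name__ == "__main__":
    # Illustrative sentences only; they are not quoted from either privacy policy.
    policy_a = "This app values your privacy and collects device information."
    policy_b = "This app respects your privacy and collects device information."
    print(f"similarity: {word_similarity(policy_a, policy_b):.2f}")
    print(shared_phrases(policy_a, policy_b))
```

On real documents, the same functions would be run on saved copies of the full policy or description texts. Generic legal boilerplate also scores high across unrelated apps, so listing the long `shared_phrases` runs helps show whether the overlap goes beyond standard wording.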

Another critical indicator of deceptive monetization is an unrealistic pricing model.

– the inclusion of a “lifetime” purchase option for a service that relies on ongoing AI infrastructure is a major red flag. Services powered by OpenAI models incur continuous operational costs, including server usage, API calls, and ongoing maintenance, and those costs recur for as long as the user keeps using the service. Legitimate AI providers price their products accordingly (monthly or usage-based), because each user interaction carries a real, recurring cost. A one-time “lifetime” payment for unlimited access to such a service is economically unsustainable at scale and is not how established AI providers sell access. This strongly suggests that the option is misleading, quietly limited, or designed to maximize upfront payments rather than to fund a viable long-term service.
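The sustainability argument can be made concrete with a break-even calculation: a one-time price, minus the App Store commission (30% standard, or 15% under Apple’s Small Business Program), funds only a fixed number of months of per-user API and server costs. The sketch below uses purely hypothetical figures, since neither app’s actual price or cost structure is known here; only the shape of the calculation matters.

```python
def months_covered(
    lifetime_price: float,
    monthly_cost_per_user: float,
    store_commission: float = 0.30,
) -> float:
    """Months of service that a one-time payment funds after the store's commission.

    Any finite price divided by a positive recurring cost gives a finite horizon;
    every month the user stays active after that is paid for by the developer.
    """
    if monthly_cost_per_user <= 0:
        raise ValueError("monthly_cost_per_user must be positive")
    if not 0.0 <= store_commission < 1.0:
        raise ValueError("store_commission must be in [0, 1)")
    net_revenue = lifetime_price * (1.0 - store_commission)
    return net_revenue / monthly_cost_per_user


if __name__ == "__main__":
    # HYPOTHETICAL figures for illustration only; the apps' real prices and costs are unknown.
    lifetime_price = 49.99   # one-time "lifetime" purchase, USD
    monthly_api_cost = 2.00  # assumed model/API plus server cost per active user per month, USD
    months = months_covered(lifetime_price, monthly_api_cost, store_commission=0.15)
    print(f"A lifetime purchase covers about {months:.1f} months of usage")
```

With these assumed numbers the purchase runs out in under two years; a heavier user (higher monthly cost) shortens that horizon further, which is why usage-based or recurring pricing is the norm for API-backed services.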

While inspecting the stored defaults (preferences) of both applications, we observed that they use Firebase Realtime Database endpoints following exactly the same naming convention: chatgpt-mac-<unique-id>-default-rtdb.firebaseio.com. This structure, combining “chatgpt-mac” with a short random-looking suffix and Firebase’s standard “default-rtdb” database name, indicates a templated backend setup. The only variation is the suffix, which is what one would expect if the same project name were reused across Firebase projects, since project IDs must be globally unique. This further suggests that both apps were generated from the same configuration template rather than designed independently.

```
{
    SKPurchaseIntentUpdatesLastChecked = "796466996.979499";
    "ff.operationCount" = 1;
    "firebase:host:chatgpt-mac-b32ad-default-rtdb.firebaseio.com" = "s-gke-usc1-nssi2-11.firebaseio.com";
}

{
    SKPurchaseIntentUpdatesLastChecked = "796466950.7367899";
    count = 1;
    "firebase:host:chatgpt-mac-d24f5-default-rtdb.firebaseio.com" = "s-gke-usc1-nssi3-33.firebaseio.com";
}
```
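The naming comparison can be reproduced mechanically from the two defaults dumps. The Python sketch below pulls the Realtime Database project name out of each “firebase:host:” key and splits it into a shared prefix and a per-project suffix. The regular expression and helper names are our own, and the regex assumes the key format shown in the dumps, written with plain ASCII quotes.

```python
import re

# Captures the project name in keys like
# "firebase:host:chatgpt-mac-b32ad-default-rtdb.firebaseio.com"
FIREBASE_HOST_KEY = re.compile(
    r'"firebase:host:(?P<project>[a-z0-9-]+)-default-rtdb\.firebaseio\.com"'
)

# The relevant line from each of the two dumps.
DUMP_1 = '"firebase:host:chatgpt-mac-b32ad-default-rtdb.firebaseio.com" = "s-gke-usc1-nssi2-11.firebaseio.com";'
DUMP_2 = '"firebase:host:chatgpt-mac-d24f5-default-rtdb.firebaseio.com" = "s-gke-usc1-nssi3-33.firebaseio.com";'


def firebase_projects(dump: str) -> list[str]:
    """Return every Realtime Database project name referenced by a firebase:host key."""
    return [m["project"] for m in FIREBASE_HOST_KEY.finditer(dump)]


def split_project(project: str) -> tuple[str, str]:
    """Split e.g. 'chatgpt-mac-b32ad' into the shared prefix and the per-project suffix."""
    prefix, _, suffix = project.rpartition("-")
    return prefix, suffix


if __name__ == "__main__":
    projects = firebase_projects(DUMP_1) + firebase_projects(DUMP_2)
    parts = [split_project(p) for p in projects]
    prefixes = {prefix for prefix, _ in parts}
    print(parts)
    print("same naming template:", len(prefixes) == 1)
```

A shared prefix with differing suffixes is the pattern the report describes; on its own it shows a common naming template, not that both projects belong to the same Firebase account.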

A cross-check with the name “Muhammad Usama Amin,” found in the applications’ localization files, further reinforces the regional linkage. The name follows a common South Asian Muslim naming structure: “Muhammad” is widely used as a first name in Pakistan and other Muslim-majority regions, and it is often combined with names such as “Usama” and “Amin,” both of which have strong Islamic and Arabic origins. Additionally, publicly documented individuals with the same name are associated with Pakistan, supporting the likelihood that this developer artifact comes from the same regional context as the other developer names (“Hadiqa Bashir” and “Hira Amin”). The presence of this name in the localization files suggests reused development material and further supports the conclusion that these applications are part of a coordinated network with a shared geographic origin and codebase.
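A check like this is easy to repeat on any app bundle. The sketch below searches a bundle’s localization files for a given string. It assumes the files use the `.strings` or `.stringsdict` extensions and searches raw bytes in UTF-8 and both UTF-16 byte orders, because compiled localization files may be stored as UTF-16 text or as binary property lists rather than plain UTF-8. The function name and the fake demo bundle are hypothetical.

```python
import tempfile
from pathlib import Path

# Encodings a localization file might plausibly use for the same text.
ENCODINGS = ("utf-8", "utf-16-le", "utf-16-be")


def find_string_in_localizations(
    bundle: Path,
    needle: str,
    patterns: tuple[str, ...] = ("*.strings", "*.stringsdict"),
) -> list[Path]:
    """Return localization files under `bundle` whose raw bytes contain `needle`."""
    encoded = [needle.encode(enc) for enc in ENCODINGS]
    hits: list[Path] = []
    for pattern in patterns:
        for path in sorted(bundle.rglob(pattern)):
            data = path.read_bytes()
            if any(candidate in data for candidate in encoded):
                hits.append(path)
    return hits


def _demo() -> None:
    """Build a throwaway fake bundle with one UTF-16 .strings file and search it."""
    with tempfile.TemporaryDirectory() as tmp:
        lproj = Path(tmp, "Demo.app", "Contents", "Resources", "en.lproj")
        lproj.mkdir(parents=True)
        # Hypothetical key; only the value matters for the search.
        (lproj / "Localizable.strings").write_bytes(
            '"author" = "Muhammad Usama Amin";'.encode("utf-16-le")
        )
        (lproj / "InfoPlist.strings").write_bytes(b'"CFBundleName" = "Demo";')
        for hit in find_string_in_localizations(Path(tmp), "Muhammad Usama Amin"):
            print(hit.relative_to(tmp))


if __name__ == "__main__":
    _demo()
```

For a real app, the call would be `find_string_in_localizations(Path("/Applications/Example.app"), "Muhammad Usama Amin")`, where the path is a placeholder. A byte-level match only shows that the string is present in the bundle, not who put it there.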

Conclusion

The analysis of both macOS applications reveals overwhelming evidence that they are not independent products, but rather duplicated instances of the same underlying software distributed through multiple developer accounts. Both apps share identical naming structures, nearly indistinguishable icons designed to mimic legitimate AI branding, and highly similar descriptions built from the same template. Their internal behavior further confirms this linkage, including identical UI layouts, paywall wording, fake error messages, and the same proxy-detection logic triggered during network analysis.

Static analysis strengthens this conclusion, showing overlapping strings, shared class structures, identical localization artifacts (including references to “Muhammad Usama Amin” and “FitnessFreak”), and matching backend configurations such as Firebase endpoints following the same naming convention. Additionally, both apps rely on the same infrastructure patterns, including Google Sites-hosted policies and disposable Gmail-based support, indicating a coordinated and reusable deployment setup.

The monetization model, particularly the unrealistic “lifetime” purchase option for a service dependent on ongoing AI infrastructure, further points to deceptive intent.

This behavior aligns with known patterns of App Store scam operations, in which developers deploy multiple cloned apps under different identities to evade detection and maximize revenue. Such practices, including impersonation and subscription traps, have been widely documented in AI-related app scams.