Kali Linux Advances AI-Driven Penetration Testing with Local Integration of Ollama, 5ire, and MCP-Kali-Server

The Kali Linux development team has published a setup that runs AI-assisted penetration testing entirely on local hardware. It combines Ollama, 5ire, and MCP-Kali-Server so that security professionals can drive Kali's tools from natural-language prompts without sending data to external cloud services, which improves privacy and operational security.

Addressing Privacy Concerns in Penetration Testing

Traditional AI-driven penetration testing tools often depend on cloud-based services, raising significant privacy and security concerns, especially in sensitive environments. Recognizing these challenges, the Kali Linux team has developed a fully self-hosted solution that operates entirely on local hardware. This approach ensures that all data remains within the user’s control, mitigating risks associated with data exposure to third-party services.

Hardware Requirements and Setup

Implementing this local AI-driven penetration testing environment requires specific hardware capabilities. The setup is optimized for systems equipped with NVIDIA GPUs that support CUDA, which allows large language models (LLMs) to run efficiently on local hardware. For demonstration purposes, the team used an NVIDIA GeForce GTX 1060 with 6 GB of VRAM, a mid-range card that balances performance and cost.

To enable CUDA acceleration, users must install NVIDIA’s proprietary drivers, replacing the default open-source Nouveau driver, which lacks the necessary compute support for local LLM inference. After installation and a system reboot, the setup can be verified using the `nvidia-smi` command, confirming the operational status of the driver and CUDA versions.
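The verification step can also be scripted. The following is a minimal sketch, not part of the Kali team's guide: it calls `nvidia-smi` with its `--query-gpu` and `--format` options and parses the CSV rows. The function names `parse_gpu_rows` and `query_gpus` are illustrative.

```python
import csv
import io
import subprocess

# nvidia-smi can emit selected fields as CSV; "nounits" reports memory in MiB
# as a bare number.
QUERY = [
    "nvidia-smi",
    "--query-gpu=name,driver_version,memory.total",
    "--format=csv,noheader,nounits",
]


def parse_gpu_rows(csv_text):
    """Turn nvidia-smi CSV output into a list of dicts, one per GPU."""
    rows = []
    for fields in csv.reader(io.StringIO(csv_text)):
        if len(fields) != 3:  # skip blank or unexpected lines
            continue
        name, driver, mem_mib = (f.strip() for f in fields)
        rows.append({"name": name, "driver": driver, "memory_mib": int(mem_mib)})
    return rows


def query_gpus():
    """Run nvidia-smi; raises if the proprietary driver is not loaded."""
    result = subprocess.run(QUERY, capture_output=True, text=True, check=True)
    return parse_gpu_rows(result.stdout)


if __name__ == "__main__":
    for gpu in query_gpus():
        print(gpu)
```

If the Nouveau driver is still active, `nvidia-smi` is typically missing or exits with an error, so `query_gpus()` raises instead of returning rows.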

Ollama: The Local LLM Engine

At the core of this setup is Ollama, a tool designed to simplify the deployment and management of open-weight language models. Ollama serves as the local LLM engine, allowing users to download and run models directly on their hardware. Installation involves extracting the Linux AMD64 tarball and configuring Ollama as a `systemd` service to ensure it runs persistently in the background upon system startup.
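Once the service is running, one way to confirm it is reachable is to query Ollama's HTTP API. The sketch below assumes Ollama is listening on its default address, `127.0.0.1:11434`, and uses the `/api/tags` endpoint, which lists locally installed models. The helper names are illustrative.

```python
import json
import urllib.request

OLLAMA_URL = "http://127.0.0.1:11434"  # Ollama's default listen address (assumed unchanged)


def model_names(tags_payload):
    """Extract sorted model names from an /api/tags response body."""
    return sorted(m["name"] for m in tags_payload.get("models", []))


def fetch_tags(base_url=OLLAMA_URL, timeout=5):
    """Fetch the list of locally installed models from a running Ollama service."""
    with urllib.request.urlopen(f"{base_url}/api/tags", timeout=timeout) as resp:
        return json.load(resp)


if __name__ == "__main__":
    # Fails with a connection error if the systemd service is not running.
    print(model_names(fetch_tags()))
```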

The team evaluated several models compatible with Ollama, including `llama3.1:8b` (4.9 GB), `llama3.2:3b` (2.0 GB), and `qwen3:4b` (2.5 GB). These models were selected because they fit within the 6 GB VRAM constraint of the demonstration hardware. Crucially, the chosen models support tool calling, which lets them invoke external commands through the Model Context Protocol (MCP) layer. Without tool calling, the model could only describe a scan rather than run one.
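The sizing reasoning can be written as a quick check. This is a rough sketch: it treats the listed download size as a proxy for VRAM use, and the 1 GB headroom for context and runtime overhead is an assumption, not a figure from the Kali team.

```python
# Model sizes in GB as listed above.
MODELS_GB = {
    "llama3.1:8b": 4.9,
    "llama3.2:3b": 2.0,
    "qwen3:4b": 2.5,
}


def models_that_fit(vram_gb, headroom_gb=1.0, models=MODELS_GB):
    """Return model names whose size fits in VRAM after reserving headroom.

    headroom_gb is an assumed allowance for the context cache and runtime
    overhead; tune it for the actual workload.
    """
    budget = vram_gb - headroom_gb
    return sorted(name for name, size in models.items() if size <= budget)


if __name__ == "__main__":
    print(models_that_fit(6.0))  # the 6 GB demonstration card
    print(models_that_fit(4.0))  # a smaller, hypothetical 4 GB card
```

Under these assumptions all three models fit on the 6 GB card, while a 4 GB card would be limited to the two smaller ones.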

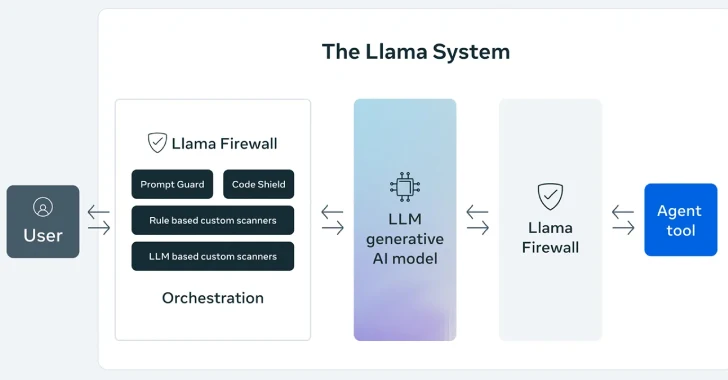

MCP-Kali-Server: Bridging AI and Penetration Testing Tools

The Model Context Protocol (MCP) is what lets a conversational LLM call security tools instead of only describing them. The `mcp-kali-server` package, available in Kali’s repositories, acts as a lightweight API bridge, exposing a local Flask server on `127.0.0.1:5000`. Upon initialization, `mcp-kali-server` verifies the presence of essential penetration testing tools such as `nmap`, `gobuster`, `dirb`, and `nikto`. It then connects to the MCP client, presenting the available tools for AI-assisted operations.
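The same kind of presence check can be reproduced before starting the server. The sketch below is not `mcp-kali-server`'s own code; it simply looks each tool up on `PATH` with the standard library, and the function names are illustrative.

```python
import shutil

# Tools named above; the server may check for others as well.
REQUIRED_TOOLS = ("nmap", "gobuster", "dirb", "nikto")


def find_tools(names=REQUIRED_TOOLS):
    """Map each tool name to its resolved path, or None if it is not on PATH."""
    return {name: shutil.which(name) for name in names}


def missing_tools(names=REQUIRED_TOOLS):
    """Return the tools that could not be found on PATH."""
    return [name for name, path in find_tools(names).items() if path is None]


if __name__ == "__main__":
    missing = missing_tools()
    if missing:
        print("Missing tools:", ", ".join(missing))
    else:
        print("All listed tools are installed.")
```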

This integration supports a range of AI-assisted penetration testing tasks, including web application testing, Capture The Flag (CTF) challenge solving, and work on platforms like Hack The Box or TryHackMe. Because the LLM can call the same command-line tools a tester would run by hand, MCP-Kali-Server lets an assessment be driven from natural-language prompts.

5ire: Connecting Ollama and MCP

Since Ollama does not natively support MCP, an intermediary client bridge is necessary to facilitate communication between the LLM and the penetration testing tools. The Kali Linux team selected 5ire, an open-source AI assistant and MCP client, to fulfill this role. Distributed as a Linux AppImage, 5ire provides a user-friendly interface for managing AI-driven tasks.

Installation of 5ire involves placing the AppImage in the `/opt/5ire/` directory, linking it into the system path, and configuring a desktop entry for easy access. Within 5ire’s graphical user interface, users can enable Ollama as the LLM provider, toggle tool support for each model, and register `mcp-kali-server` as a local tool using the command `/usr/bin/mcp-server`. This configuration ensures seamless integration between the AI engine and the penetration testing tools.
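The desktop entry is a small text file in the freedesktop.org format. The sketch below generates one; the AppImage filename, the symlink location `/usr/local/bin/5ire`, and the `Categories` value are all assumptions chosen for illustration, since only the `/opt/5ire/` directory is given above.

```python
from pathlib import PurePosixPath

# Only /opt/5ire/ comes from the setup described above; the rest is assumed.
APPIMAGE = PurePosixPath("/opt/5ire/5ire.AppImage")  # hypothetical filename
LAUNCHER = PurePosixPath("/usr/local/bin/5ire")      # assumed symlink on PATH


def desktop_entry(exec_path=LAUNCHER, name="5ire"):
    """Render a minimal freedesktop.org desktop entry as text."""
    lines = [
        "[Desktop Entry]",
        "Type=Application",
        f"Name={name}",
        f"Exec={exec_path}",
        "Terminal=false",
        "Categories=Utility;",
    ]
    return "\n".join(lines) + "\n"


if __name__ == "__main__":
    # The symlink step would be roughly: ln -s <APPIMAGE> <LAUNCHER>
    print(f"link {LAUNCHER} -> {APPIMAGE}")
    print(desktop_entry(), end="")
```

Saving the output as a `.desktop` file under `/usr/share/applications/` (or `~/.local/share/applications/` for a single user) makes the launcher appear in the desktop menu.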

Validating the Setup: Natural Language Nmap Execution

To validate the functionality of this local AI-driven penetration testing environment, the team conducted a test using a natural language prompt. They instructed 5ire, backed by the `qwen3:4b` model, to perform a TCP port scan of `scanme.nmap.org` across ports 80, 443, 21, and 22.

The LLM correctly interpreted the natural language request, invoked `nmap` through the MCP chain, and returned structured results. All model inference ran locally; only the scan traffic to the target left the machine. The `ollama ps` command reported the model as running 100% on the GPU, confirming that inference was not falling back to the CPU.
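The chain from prompt to command can be illustrated in miniature. In the real setup, 5ire and `mcp-kali-server` handle this exchange; the sketch below only shows the general shape, using the `tools` field of Ollama's `/api/chat` request. The tool name `nmap_scan`, its parameter schema, and the choice of a TCP connect scan (`-sT`) are assumptions made for the example.

```python
import re


def build_chat_request(model, prompt):
    """Build an Ollama /api/chat request body that declares one callable tool."""
    return {
        "model": model,
        "stream": False,
        "messages": [{"role": "user", "content": prompt}],
        "tools": [{
            "type": "function",
            "function": {
                "name": "nmap_scan",  # hypothetical tool name
                "description": "Run a TCP connect scan against a host",
                "parameters": {
                    "type": "object",
                    "properties": {
                        "target": {"type": "string"},
                        "ports": {"type": "array", "items": {"type": "integer"}},
                    },
                    "required": ["target", "ports"],
                },
            },
        }],
    }


def nmap_argv(tool_call):
    """Translate a model tool call into an nmap argument list, with validation."""
    fn = tool_call["function"]
    if fn["name"] != "nmap_scan":
        raise ValueError(f"unexpected tool: {fn['name']}")
    target = fn["arguments"]["target"]
    # Reject anything that could be read as an nmap option or shell syntax.
    if not re.fullmatch(r"[A-Za-z0-9][A-Za-z0-9.\-]*", target):
        raise ValueError(f"invalid target: {target!r}")
    ports = sorted({int(p) for p in fn["arguments"]["ports"]})
    if not ports or not all(1 <= p <= 65535 for p in ports):
        raise ValueError("ports must be in 1..65535")
    return ["nmap", "-sT", "-p", ",".join(map(str, ports)), target]


if __name__ == "__main__":
    # Shape of one entry in message.tool_calls from an Ollama chat response.
    call = {"function": {"name": "nmap_scan",
                         "arguments": {"target": "scanme.nmap.org",
                                       "ports": [80, 443, 21, 22]}}}
    print(" ".join(nmap_argv(call)))
```

Validating the target and ports before building the argument list matters because model output is untrusted input: a malformed or manipulated tool call should fail rather than reach the command line.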

Implications for Privacy-Preserving Penetration Testing

This development represents a significant advancement in privacy-preserving penetration testing methodologies. By eliminating the need for cloud-based AI services, security professionals can conduct comprehensive assessments without exposing sensitive data to external entities. The combination of Ollama, 5ire, and MCP-Kali-Server provides a robust, self-contained environment for AI-assisted penetration testing, aligning with the stringent privacy and security requirements of modern organizations.

The approach is also adaptable: users can choose models that match their available VRAM and testing needs, from the 2.0 GB `llama3.2:3b` to the 4.9 GB `llama3.1:8b`. As AI plays a larger role in cybersecurity, running these tools locally lets organizations adopt them while keeping control over their data and operations.

Conclusion

Combining Ollama, 5ire, and MCP-Kali-Server on Kali Linux lets AI-assisted assessments run entirely on local hardware, which addresses the privacy concerns raised by cloud-hosted AI tools. As the cybersecurity landscape evolves, setups like this one give professionals a practical way to bring LLMs into their workflow for identifying and mitigating vulnerabilities.