Critical ‘ClawJacked’ Vulnerability Exposes OpenClaw AI Agents to Remote Hijacking

OpenClaw, a prominent artificial intelligence (AI) platform, recently addressed a critical security vulnerability that could have permitted malicious websites to gain unauthorized control over locally running AI agents. This flaw, identified by cybersecurity firm Oasis Security and dubbed ClawJacked, resided within the core system of OpenClaw, affecting the gateway component—a local WebSocket server bound to localhost and secured by a password.

Understanding the ClawJacked Vulnerability

The ClawJacked vulnerability exploited the inherent trust mechanisms of the OpenClaw gateway. Typically, when a new device attempts to connect, user confirmation is required to establish trust. However, the gateway automatically approved device registrations from localhost connections without user intervention. This oversight allowed attackers to exploit the system by following a specific sequence:

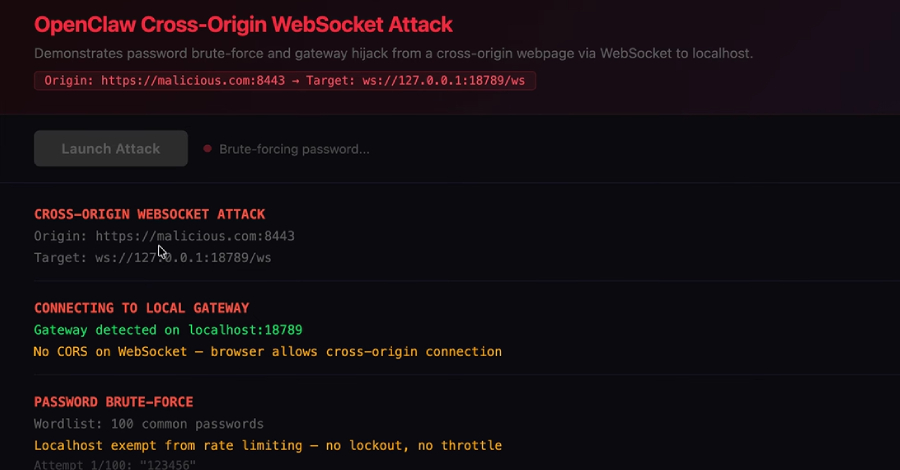

1. Initiating a WebSocket Connection: An attacker-controlled website could execute malicious JavaScript to open a WebSocket connection to the OpenClaw gateway on the local machine.

2. Brute-Forcing the Gateway Password: Due to the absence of rate-limiting mechanisms, the script could systematically attempt various passwords until successful authentication was achieved.

3. Registering as a Trusted Device: Upon gaining administrative access, the script could register itself as a trusted device. The gateway’s automatic approval of localhost connections facilitated this step without alerting the user.

4. Gaining Full Control: With trusted status, the attacker could interact with the AI agent, access configuration data, enumerate connected nodes, and read application logs, effectively seizing control over the AI agent.

Oasis Security highlighted the gravity of this issue, stating, Any website you visit can open one to your localhost. Unlike regular HTTP requests, the browser doesn’t block these cross-origin connections. So while you’re browsing any website, JavaScript running on that page can silently open a connection to your local OpenClaw gateway. The user sees nothing.

OpenClaw’s Swift Response and Mitigation Measures

Upon responsible disclosure of the vulnerability, OpenClaw acted promptly, releasing version 2026.2.25 on February 26, 2026, to address the issue. Users are strongly advised to update to this latest version immediately. Additionally, it is recommended to:

– Audit Access Permissions: Regularly review and manage access granted to AI agents to ensure only authorized entities have control.

– Implement Governance Controls: Establish robust governance protocols for non-human (agentic) identities to prevent unauthorized access and actions.

Broader Security Implications in the OpenClaw Ecosystem

The ClawJacked vulnerability underscores the need for heightened security measures within the OpenClaw ecosystem. AI agents often possess extensive access to various systems and can execute tasks across enterprise tools. If compromised, they can significantly amplify the potential damage.

Reports from Bitsight and NeuralTrust have highlighted that OpenClaw instances connected to the internet expand the attack surface. Each integrated service increases the potential impact, and attackers can exploit prompt injections in content processed by the agent, such as emails or Slack messages, to execute malicious actions.

Additional Vulnerabilities and Ongoing Security Enhancements

In addition to ClawJacked, OpenClaw addressed a log poisoning vulnerability that allowed attackers to write malicious content to log files via WebSocket requests to publicly accessible instances on TCP port 18789. Since the agent reads its own logs to troubleshoot tasks, this loophole could be exploited to embed indirect prompt injections, leading to unintended consequences. This issue was resolved in version 2026.2.13, released on February 14, 2026.

Furthermore, OpenClaw has been found susceptible to multiple vulnerabilities, including remote code execution, command injection, server-side request forgery (SSRF), authentication bypass, and path traversal. These vulnerabilities have been addressed in various versions, including 2026.1.20, 2026.1.29, 2026.2.1, 2026.2.2, and 2026.2.14.

Emerging Threats in the OpenClaw Ecosystem

Recent research has revealed that malicious skills uploaded to ClawHub, an open marketplace for downloading OpenClaw skills, are being used to deliver a new variant of Atomic Stealer, a macOS information stealer. The infection chain begins with a seemingly benign SKILL.md file that installs a prerequisite. OpenClaw then fetches the installation instructions from a website, which includes a malicious command to download and execute the stealer payload.

Additionally, a new malware delivery campaign has been identified, where a threat actor leaves comments on legitimate skill listing pages, urging users to run a command in the Terminal app if the skill doesn’t work on macOS. This command retrieves Atomic Stealer from a known malicious IP address.

An analysis of 3,505 ClawHub skills by AI security company Straiker uncovered 71 malicious ones, some posing as legitimate cryptocurrency tools but containing hidden functionality to redirect funds to attacker-controlled wallets. Two other skills, bob-p2p-beta and runware, have been linked to a multi-layered cryptocurrency scam targeting the AI agent ecosystem.

Recommendations for Users and Organizations

Given the evolving threat landscape, users and organizations are advised to:

– Audit Skills Before Installation: Thoroughly review skills before integrating them into the OpenClaw environment.

– Limit Credential Sharing: Avoid providing credentials and keys unless absolutely necessary.

– Monitor Skill Behavior: Continuously observe the behavior of installed skills for any signs of malicious activity.

Microsoft has also issued an advisory, warning that unguarded deployment of self-hosted agent runtimes like OpenClaw could lead to credential exposure, memory modification, and host compromise if the agent is tricked into retrieving and running malicious code through poisoned skills or prompt injections.

As AI agent frameworks become more prevalent in enterprise environments, it is imperative to treat them as untrusted code execution with persistent credentials. Deployments should be isolated, using dedicated, non-privileged credentials, and access should be limited to non-sensitive data. Continuous monitoring and a rebuild plan should be integral to the operating model.