Guide Labs Unveils Steerling-8B: A Breakthrough in Interpretable Large Language Models

In the rapidly evolving field of artificial intelligence, understanding the decision-making processes of deep learning models remains a formidable challenge. Complex behaviors such as unexpected biases, hallucinations, and unpredictable outputs often arise from the opaque nature of neural networks with billions of parameters. Addressing this issue head-on, San Francisco-based startup Guide Labs has introduced Steerling-8B, an 8-billion-parameter large language model (LLM) designed with a novel architecture that emphasizes interpretability.

The Genesis of Steerling-8B

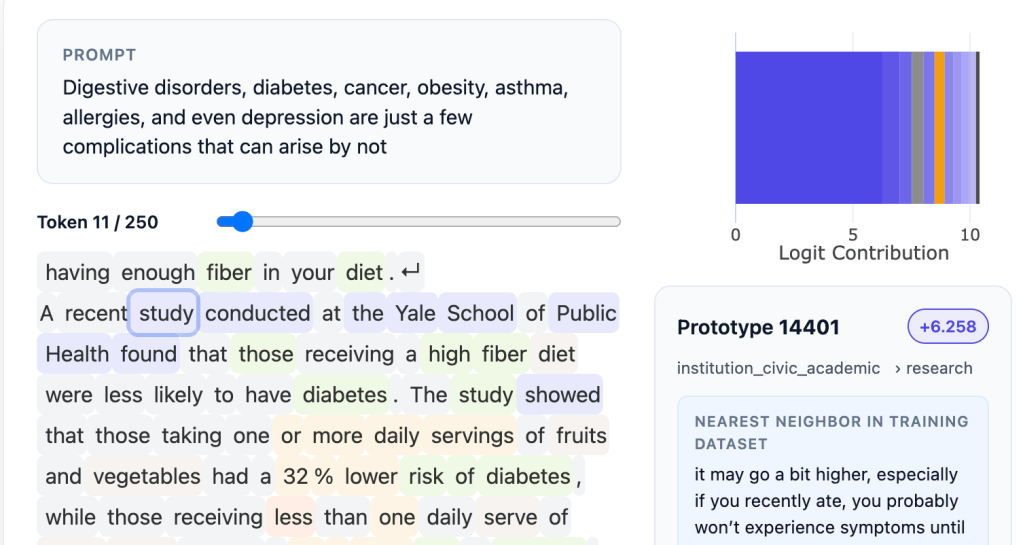

Founded by CEO Julius Adebayo and Chief Science Officer Aya Abdelsalam Ismail, Guide Labs aims to demystify the inner workings of LLMs. Steerling-8B stands out by allowing each token it generates to be traced back to its origins in the training data. This traceability enables users to identify the specific reference materials for facts cited by the model and to comprehend how the model processes complex concepts like humor or gender.

Adebayo, who initiated this work during his PhD at MIT, co-authored a pivotal 2018 paper highlighting the unreliability of existing methods for understanding deep learning models. This research paved the way for a new approach to building LLMs: a concept layer integrated into the model categorizes data into traceable segments. Although this method requires extensive initial data annotation, the team leveraged other AI models to assist, which made it feasible to train Steerling-8B as a substantial proof of concept.
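To make the idea of a concept layer concrete, here is a minimal toy sketch in Python. It is not Guide Labs' architecture, which the article does not detail; the concept names, weights, and function names are all hypothetical. It only illustrates the general pattern in which every output score is computed through named concepts, so the score can be broken down concept by concept.

```python
from typing import Dict, List

# Hypothetical concept names, chosen only for illustration.
CONCEPTS: List[str] = ["finance", "humor", "gender"]

# Hypothetical weights: each row maps a 2-d hidden vector to one concept.
HIDDEN_TO_CONCEPT: List[List[float]] = [
    [1.0, 0.0],  # finance
    [0.0, 1.0],  # humor
    [0.5, 0.5],  # gender
]

# Hypothetical weights from each concept (same order as CONCEPTS) to a token.
CONCEPT_TO_TOKEN: Dict[str, List[float]] = {
    "loan": [2.0, 0.0, 0.0],
    "joke": [0.0, 3.0, 0.0],
}


def concept_activations(hidden: List[float]) -> List[float]:
    """Project the hidden vector onto each concept and clip negatives to zero."""
    return [
        max(0.0, sum(w * h for w, h in zip(row, hidden)))
        for row in HIDDEN_TO_CONCEPT
    ]


def token_contributions(hidden: List[float], token: str) -> Dict[str, float]:
    """Return how much each named concept contributes to one token's score."""
    acts = concept_activations(hidden)
    weights = CONCEPT_TO_TOKEN[token]
    return {name: a * w for name, a, w in zip(CONCEPTS, acts, weights)}


def token_score(hidden: List[float], token: str) -> float:
    """The token's score is exactly the sum of its per-concept contributions."""
    return sum(token_contributions(hidden, token).values())


if __name__ == "__main__":
    hidden_state = [1.0, 2.0]
    print(token_contributions(hidden_state, "loan"))
    print(token_score(hidden_state, "joke"))
```

Because the score is a plain sum over concepts, the breakdown is exact by construction rather than estimated after the fact, which is the property the article attributes to designing for interpretability up front.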

Engineering for Interpretability

Traditional interpretability efforts often resemble neuroscience on a model, attempting to reverse-engineer understanding from complex systems. Guide Labs flips this paradigm by engineering the model from the ground up to be inherently interpretable. This proactive design eliminates the need for post hoc analysis, providing clarity on the model’s decision-making processes.

Adebayo illustrates the significance of this approach: “If I have a trillion ways to encode gender, and I encode it in 1 billion of the 1 trillion things that I have, you have to make sure you find all those 1 billion things that I’ve encoded, and then you have to be able to reliably turn that on, turn them off. You can do it with current models, but it’s very fragile… It’s sort of one of the holy grail questions.”
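The contrast Adebayo draws can be sketched in a few lines of Python. This is an illustrative toy under a strong assumption, namely that each concept lives in a single named unit; it does not reflect Steerling-8B's actual interface, and the `steer` function and concept names are hypothetical. Under that assumption, turning a concept on or off is a single, reliable operation rather than a search through a billion scattered encodings.

```python
from typing import Dict, Iterable, Optional


def steer(
    activations: Dict[str, float],
    disable: Iterable[str] = (),
    scale: Optional[Dict[str, float]] = None,
) -> Dict[str, float]:
    """Return a copy of the concept activations with some concepts adjusted.

    Concepts listed in `disable` are set to zero; concepts listed in `scale`
    are multiplied by the given factor. The input dict is left unchanged.
    """
    factors = scale or {}
    disabled = set(disable)
    steered: Dict[str, float] = {}
    for name, value in activations.items():
        if name in disabled:
            steered[name] = 0.0
        else:
            steered[name] = value * factors.get(name, 1.0)
    return steered


if __name__ == "__main__":
    # Hypothetical activations for one generated token.
    acts = {"finance": 1.0, "gender": 1.5}
    print(steer(acts, disable=["gender"]))
    print(steer(acts, scale={"finance": 2.0}))
```

In a conventional model there is no such dictionary to edit, which is why Adebayo describes the same intervention there as possible but fragile.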

Balancing Interpretability and Emergent Behaviors

A common concern with highly interpretable models is that they may suppress emergent behaviors, meaning the model’s ability to generalize and generate novel insights beyond its training data. Guide Labs says Steerling-8B retains this capability: the team tracks “discovered concepts” that the model identifies on its own, such as quantum computing, as evidence that interpretability does not come at the expense of innovation.

Implications Across Industries

The implications of an interpretable LLM like Steerling-8B are vast and varied:

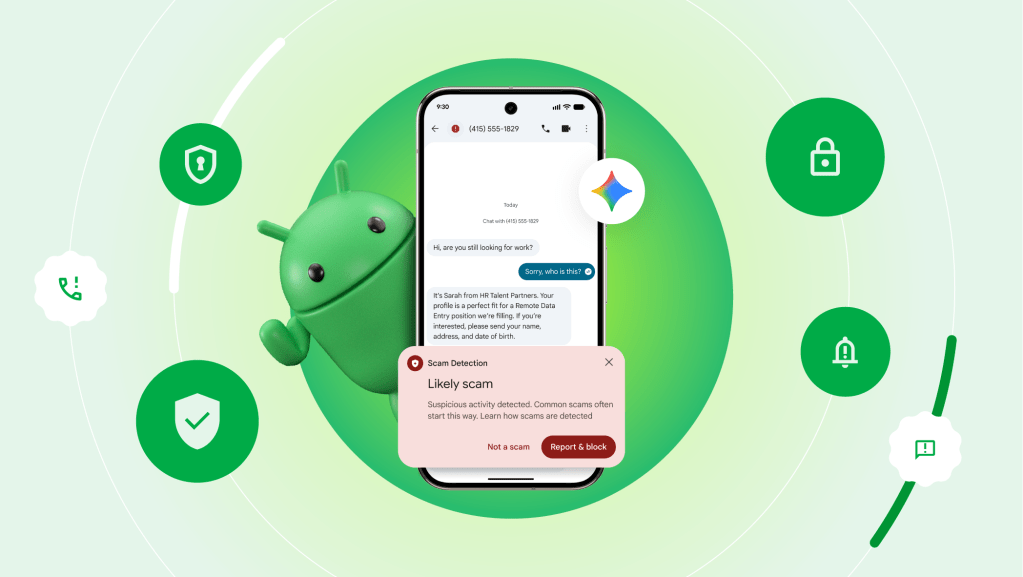

– Consumer Applications: For consumer-facing LLMs, this architecture allows developers to enforce content guidelines more effectively, such as blocking the use of copyrighted materials or controlling outputs related to sensitive topics like violence or drug abuse.

– Regulated Industries: In sectors like finance, where models evaluate loan applicants, it’s crucial to ensure that decisions are based on financial records without unintended biases related to race or other protected characteristics. An interpretable model provides the transparency needed to meet regulatory standards.

– Scientific Research: In scientific domains, particularly in areas like protein folding, deep learning models have achieved significant successes. However, researchers require insight into the reasoning behind the model’s predictions to validate and build upon these findings. Steerling-8B offers the clarity needed for such endeavors.

Performance and Future Prospects

Guide Labs reports that Steerling-8B achieves 90% of the capability of existing models while utilizing less training data, thanks to its innovative architecture. In the company’s view, this efficiency demonstrates that training interpretable models has transitioned from a scientific challenge to an engineering problem. Adebayo emphasizes, “We figured out the science and we can scale them, and there is no reason why this kind of model wouldn’t match the performance of the frontier level models, which often have many more parameters.”

Looking ahead, Guide Labs plans to develop a larger model and offer API and agentic access to users. Emerging from Y Combinator and securing a $9 million seed round from Initialized Capital in November 2024, the company is well-positioned to advance its mission of making AI systems more transparent and trustworthy.

The Broader Context

The release of Steerling-8B aligns with a growing industry focus on the interpretability of AI models. For instance, OpenAI has been developing tools to automatically identify which parts of an LLM are responsible for specific behaviors, aiming to enhance trust in AI systems. Similarly, startups like Patronus AI are creating evaluation tools for LLMs, particularly for regulated industries where accuracy and transparency are paramount.

Conclusion

As AI systems become increasingly integrated into various aspects of society, the need for transparency and interpretability becomes more pressing. Guide Labs’ Steerling-8B represents a significant step toward demystifying the decision-making processes of large language models. By providing clear insights into how and why these models generate specific outputs, Steerling-8B paves the way for more responsible and trustworthy AI applications across diverse industries.