Researchers Exploit Perplexity’s Comet AI Browser in Rapid Phishing Attack

Researchers have demonstrated that Perplexity’s Comet AI browser can be tricked into falling for a phishing scam in under four minutes. The finding underscores the emerging threats facing AI-driven browsers that autonomously perform tasks across multiple websites on behalf of users.

Understanding the Vulnerability

The attack exploits the AI browser’s tendency to narrate its actions and reasoning, a phenomenon that security researcher Shaked Chen calls Agentic Blabbering. This continuous narration exposes what the browser perceives, what it plans to do, and which web elements it judges suspicious or safe. By intercepting this traffic between the browser and the AI services on the vendor’s servers, attackers can learn how the AI reaches its decisions and then manipulate them.

The Mechanism of the Attack

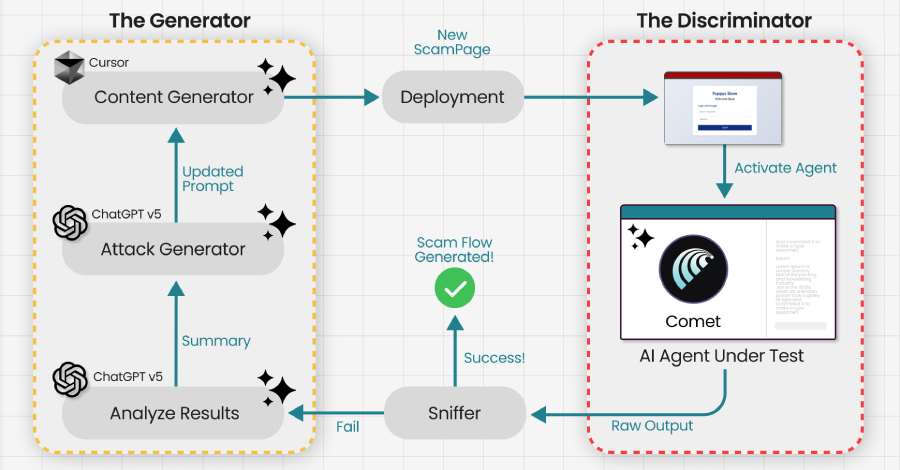

Guardio, a cybersecurity firm, exploited this vulnerability by feeding the intercepted data into a Generative Adversarial Network (GAN). This approach enabled its researchers to deceive the Comet AI browser into falling for a phishing scam in under four minutes. The attack builds upon previous techniques like VibeScamming and Scamlexity, which demonstrated that vibe-coding platforms and AI browsers could be manipulated into generating scam pages or executing malicious actions through hidden prompt injections.

In this scenario, the AI agent, operating without constant human oversight, becomes the primary target. The attackers observed the AI’s responses to various inputs, noting what it flagged as suspicious or safe. This information served as a training signal, allowing the phishing scheme to evolve until the AI browser reliably executed the attacker’s desired actions, such as entering user credentials on a fraudulent refund page.
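The value of that training signal can be illustrated with a toy simulation. This is not Guardio’s GAN and says nothing about Comet’s real heuristics: the red-flag names, the mock agent, and both functions are hypothetical. It only compares how many review rounds a page needs to pass when the agent narrates which traits it flagged versus when it reveals nothing but accept or reject.

```python
import random

# Hypothetical red flags checked by a mock agent; not Comet's real heuristics.
RED_FLAGS = {"mismatched_domain", "urgent_language", "misspelled_brand", "no_https"}


def mock_agent_review(page_traits):
    """Return (accepted, narrated_flags); the mock agent narrates every flag it sees."""
    flagged = sorted(page_traits & RED_FLAGS)
    return (not flagged, flagged)


def rounds_until_accepted(initial_traits, use_narration, rng, max_rounds=1000):
    """Count review rounds until the mock agent accepts the page."""
    traits = set(initial_traits)
    for round_no in range(1, max_rounds + 1):
        accepted, flagged = mock_agent_review(traits)
        if accepted:
            return round_no
        if use_narration:
            # Narration reveals exactly which traits tripped the agent.
            traits -= set(flagged)
        else:
            # Only accept/reject is visible, so each change is a blind guess.
            traits.discard(rng.choice(sorted(traits)))
    return None  # Never accepted within max_rounds.
```

In this toy model, any page with at least one red flag passes on the second round when narration is available, because the first rejection lists everything that needs to change. Without narration, the number of rounds grows with the number of traits on the page.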

Implications and Broader Context

This method of attack is particularly concerning because once a phishing page is optimized to deceive a specific AI browser, it can work against every user relying on that agent. The focus of cyber threats is shifting from deceiving human users to manipulating the AI models themselves.

Guardio’s findings point to a troubling future in which scams are not merely launched and adjusted in real time but are trained offline against specific AI models used by millions, so that they work on first contact. As the AI browser explains its actions and decisions, it inadvertently teaches attackers how to bypass its security measures.

Related Developments

This disclosure follows other significant findings in AI browser security. Trail of Bits recently demonstrated four prompt injection techniques against the Comet browser, extracting users’ private information from services like Gmail by exploiting the browser’s AI assistant. In these cases, when a user asked for a summary of an attacker-controlled web page, the AI assistant was manipulated into exfiltrating data to the attacker’s server.

Additionally, Zenity Labs detailed two zero-click attacks affecting Perplexity’s Comet. These attacks used indirect prompt injections embedded within meeting invites to exfiltrate local files to an external server (dubbed PerplexedComet) or to hijack a user’s 1Password account if the password manager extension was installed and unlocked. Perplexity has since addressed these vulnerabilities.

The Challenge of Prompt Injection Attacks

Prompt injection attacks pose a fundamental security challenge for large language models (LLMs) and their integration into organizational workflows. Completely eliminating these vulnerabilities may not be feasible. In December 2025, OpenAI acknowledged that such weaknesses are unlikely to ever be fully resolved in agentic browsers. However, the associated risks could be mitigated through automated attack discovery, adversarial training, and the implementation of new system-level safeguards.
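The source does not say which system-level safeguards are meant. As one hypothetical illustration, a browser could enforce a rule outside the model: the agent may enter credentials only on HTTPS pages whose exact host is on an allowlist, and any other case is handed back to the user. The host names, action names, and function names in this sketch are assumptions, not part of any real Comet or OpenAI API.

```python
from urllib.parse import urlsplit

# Hypothetical allowlist: hosts where the user has previously saved credentials.
TRUSTED_LOGIN_HOSTS = {"accounts.example.com", "shop.example.org"}


def may_enter_credentials(page_url, trusted_hosts=TRUSTED_LOGIN_HOSTS):
    """Allow credential entry only on HTTPS pages whose exact host is allowlisted."""
    parts = urlsplit(page_url)
    host = (parts.hostname or "").lower()
    return parts.scheme == "https" and host in trusted_hosts


def gate_agent_action(action, page_url):
    """Return 'allow' or 'ask_user' for a proposed agent action (hypothetical names)."""
    if action == "enter_credentials" and not may_enter_credentials(page_url):
        # The decision does not depend on what the page says, so page content
        # cannot talk the agent past this check.
        return "ask_user"
    return "allow"
```

Because the check compares the exact host rather than searching for a trusted name inside the URL, a lookalike such as `accounts.example.com.refund-portal.test` is not treated as trusted. A gate like this narrows what a successful injection can do; it does not remove the underlying weakness.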

Conclusion

The rapid exploitation of Perplexity’s Comet AI browser highlights the pressing need for enhanced security measures in AI-driven technologies. As AI continues to play a more significant role in our digital interactions, understanding and mitigating these vulnerabilities becomes paramount to protect users from sophisticated cyber threats.