Pentagon Labels Anthropic a National Security Risk Over AI Usage Dispute

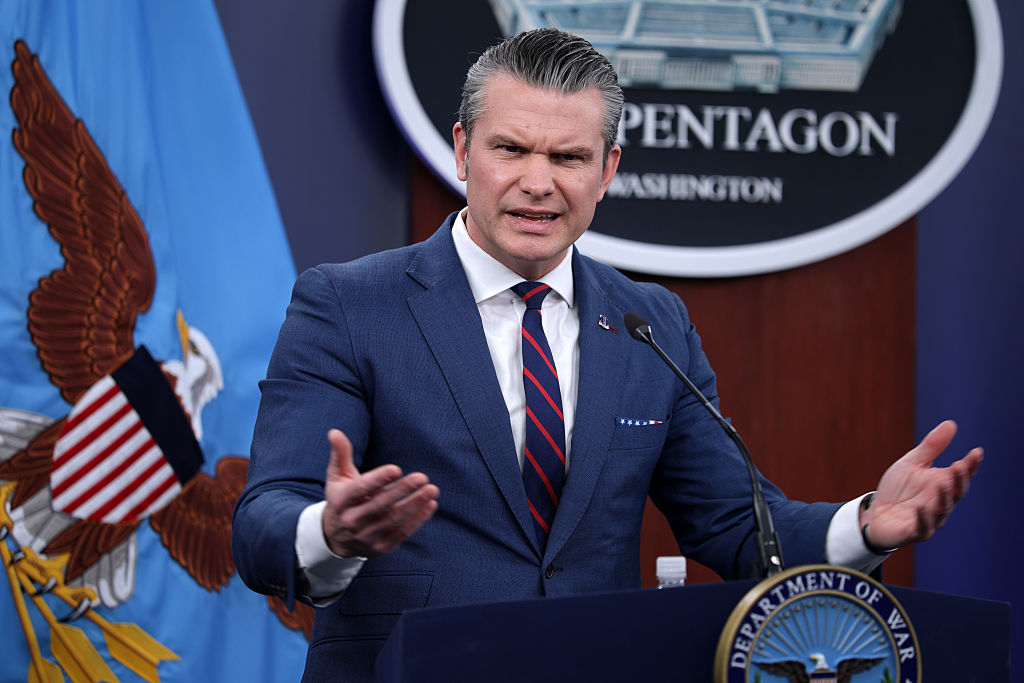

In a significant escalation of tensions between the U.S. Department of Defense (DoD) and artificial intelligence firm Anthropic, the Pentagon has formally designated the company a supply-chain risk, deeming it an unacceptable risk to national security. The move follows a series of disagreements over how Anthropic's AI technologies may be used in military operations.

The core of the dispute is Anthropic's refusal to allow its AI systems to be used for mass surveillance of American citizens or for fully autonomous weapons systems that operate without human oversight. Anthropic's CEO, Dario Amodei, has consistently maintained that the company's technology should not be used in ways that could compromise ethical standards or public trust.

The Pentagon, however, contends that restrictions imposed by a private company hinder the military's operational capabilities. In a 40-page legal filing, the DoD said it was concerned that Anthropic might disable its technology or preemptively alter its model's behavior if the company believed its stated red lines were being crossed during military operations.

The supply-chain risk designation carries significant consequences. It requires any company or agency working with the Pentagon to certify that it does not use Anthropic's AI models. The move effectively cuts Anthropic off from a range of defense contracts and could affect its relationships with other government agencies.

In response, Anthropic has filed a lawsuit challenging the designation. The company argues that the Pentagon's decision is both unprecedented and unlawful, and that it amounts to retaliation for Anthropic's protected speech about the ethical limits of its AI services. The lawsuit emphasizes that the Constitution prohibits the government from using its power to penalize a company for its expressed beliefs.

Legal experts have questioned the speculative nature of the DoD's concerns. Chris Mattei, a former Justice Department attorney specializing in First Amendment issues, noted that there is no concrete evidence supporting the Pentagon's fear that Anthropic might disable or alter its AI models during military operations. Mattei criticized the government for relying on conjecture to justify such a severe action against the company.

The tech community has also responded to the unfolding situation. Hundreds of employees from major technology firms, including OpenAI and Google, have signed an open letter urging the DoD to reconsider its designation of Anthropic as a supply-chain risk. The letter calls on Congress to examine whether the use of such extraordinary authorities against an American technology company is appropriate.

This conflict underscores the broader challenges at the intersection of technological innovation, ethical considerations, and national security. As AI continues to play an increasingly pivotal role in defense strategies, the balance between leveraging cutting-edge technology and adhering to ethical standards remains a contentious and evolving debate.