Google API Keys’ Silent Access to Gemini AI Poses Security Risks

A significant security vulnerability has been identified in how Google Cloud API keys interact with the Gemini AI endpoints. Legacy, public-facing API keys can silently gain access to data and services associated with Google’s Gemini AI, potentially exposing private files and cached data and running up unexpected charges on the owner’s billing account.

Background on API Key Usage

For over a decade, Google has advised developers to embed API keys, formatted as `AIza…` strings, directly into client-side HTML and JavaScript. Official documentation from Firebase and Google Maps indicated that these keys were intended as project identifiers for billing purposes, not as authentication credentials. Consequently, developers were led to believe that exposing these keys publicly posed minimal risk.

Emergence of the Vulnerability

The introduction of the Gemini API (Generative Language API) has altered the security landscape. When this API is enabled within a Google Cloud project, existing API keys in that project, which are unrestricted by default, automatically inherit access to Gemini’s sensitive endpoints. The change happens without any notification or warning, so developers remain unaware that their previously benign keys now carry expanded privileges.

Technical Analysis

Researchers at Truffle Security have highlighted that this issue represents a privilege escalation rather than a mere misconfiguration. The sequence of events is crucial: a developer, adhering to Google’s guidelines, embeds a Maps API key in public JavaScript. Subsequently, another team member activates the Gemini API within the same cloud project. As a result, the publicly exposed key gains access to sensitive Gemini endpoints without the original developer’s knowledge.

This vulnerability is rooted in two recognized weaknesses:

1. CWE-1188 (Insecure Default Initialization): New API keys in Google Cloud are set to Unrestricted by default, allowing access to all enabled APIs within the project, including Gemini.

2. CWE-269 (Improper Privilege Management): The default settings inadvertently assign higher privileges to API keys than intended, leading to unauthorized access.
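
The escalation sequence described above can be illustrated with a toy model. This is a minimal sketch, not Google’s implementation: `ApiKey`, `Project`, and `effective_services` are hypothetical names, the service identifiers are illustrative, and the only behavior assumed is the one the report describes, namely that a key with no API restrictions can call every API enabled in its project.

```python
from dataclasses import dataclass, field
from typing import Optional

# Illustrative service identifiers (assumptions for this sketch).
GEMINI = "generativelanguage.googleapis.com"
MAPS = "maps-backend.googleapis.com"


@dataclass
class ApiKey:
    name: str
    # None models an "Unrestricted" key; a frozenset models an explicit allowlist.
    allowed_services: Optional[frozenset] = None


@dataclass
class Project:
    enabled_services: set = field(default_factory=set)


def effective_services(key: ApiKey, project: Project) -> set:
    """Return the services a key can call under the assumed rule."""
    if key.allowed_services is None:
        # Unrestricted key: inherits every API enabled in the project.
        return set(project.enabled_services)
    return project.enabled_services & key.allowed_services


project = Project(enabled_services={MAPS})
public_key = ApiKey("maps-key-in-public-js")                   # unrestricted
scoped_key = ApiKey("maps-key-restricted", frozenset({MAPS}))  # Maps only

before = effective_services(public_key, project)
project.enabled_services.add(GEMINI)  # a teammate enables Gemini later
after = effective_services(public_key, project)

print(GEMINI in before, GEMINI in after)                   # unrestricted key
print(GEMINI in effective_services(scoped_key, project))   # restricted key
```

In this model the unrestricted key picks up Gemini access the moment the API is enabled, while the key restricted to Maps does not, which is why the recommended actions focus on finding unrestricted keys.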

Potential Exploitation Scenarios

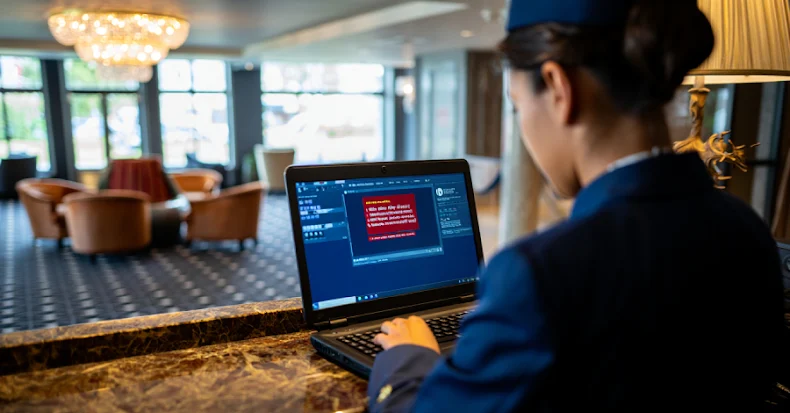

Exploiting this vulnerability requires minimal effort. An attacker visits a public website, extracts the `AIza…` key from the page source, and queries the Gemini API directly. A successful response opens the door to:

– Private Files and Cached Data: Endpoints like `/files/` and `/cachedContents/` can reveal uploaded datasets, documents, and stored AI context.

– Financial Implications: Attackers can deplete Gemini API quotas or incur substantial charges on the victim’s billing account.

– Service Disruption: Exhausting quotas can render legitimate Gemini-powered services inoperative.
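
The extraction step is simple enough to sketch, which is useful for testing your own pages. The following is a minimal example under stated assumptions: the pattern (`AIza` followed by 35 URL-safe characters) is a commonly used heuristic rather than an official format specification, the helper names `find_api_keys` and `gemini_probe_url` are hypothetical, and the URL is assumed to be the Generative Language API’s model-listing endpoint. The code only builds the request URL; it sends nothing over the network.

```python
import re
from urllib.parse import urlencode

# Assumption: Google API keys are "AIza" followed by 35 URL-safe characters.
KEY_PATTERN = re.compile(r"AIza[0-9A-Za-z_\-]{35}")

# Assumed model-listing endpoint of the Generative Language (Gemini) API.
GEMINI_MODELS_URL = "https://generativelanguage.googleapis.com/v1beta/models"


def find_api_keys(page_source: str) -> list[str]:
    """Return unique key-shaped strings in first-seen order."""
    return list(dict.fromkeys(KEY_PATTERN.findall(page_source)))


def gemini_probe_url(api_key: str) -> str:
    """Build (but do not send) a request URL that tests a key against Gemini."""
    return f"{GEMINI_MODELS_URL}?{urlencode({'key': api_key})}"


sample_key = "AIza" + "x" * 35  # placeholder, not a real key
html = f'<script src="https://maps.example/js?key={sample_key}"></script>'

keys = find_api_keys(html)
print(keys)
print(gemini_probe_url(keys[0]))
```

Requesting that URL with a key you own, and receiving a successful response, would suggest the key is accepted by the Gemini API. Only run such a check against your own projects.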

Truffle Security’s analysis of the November 2025 Common Crawl dataset, encompassing approximately 700 TiB of publicly scraped web content, identified 2,863 active Google API keys susceptible to this vulnerability. Affected entities include major financial institutions, security firms, global recruiting companies, and even Google itself. Notably, a key embedded on a Google product website since February 2023, predating Gemini’s existence, had silently acquired full access to Gemini’s model endpoints.

Google’s Response and Mitigation Measures

In response to these findings, Google has outlined a remediation plan that includes:

– Scoped Defaults for AI Studio Keys: New keys created through AI Studio will default to Gemini-only access.

– Automated Blocking: Leaked keys discovered in the wild will be automatically blocked.

– Proactive Notifications: Developers will receive alerts when exposed keys are identified.

However, as of the disclosure date, the root-cause fix was still in progress, with no confirmation of a complete architectural remedy.

Recommended Actions for Developers

Organizations utilizing Google Cloud services should take immediate steps to mitigate potential risks:

1. Audit All GCP Projects: Navigate to APIs & Services > Enabled APIs to check for the Generative Language API across all projects.

2. Inspect API Key Configurations: Identify any unrestricted keys or those explicitly permitting access to the Generative Language API.

3. Verify Public Exposure: Search client-side JavaScript, public repositories, and CI/CD pipelines for any exposed `AIza…` strings.

4. Rotate Exposed Keys Immediately: Prioritize older keys deployed under the previous guidance that considered them safe to share.

5. Utilize Security Tools: Employ tools like TruffleHog to scan codebases for live, verified Gemini-accessible keys.
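
Steps 2 and 3 can be roughed out in a few lines. The sketch below is illustrative only: `scan_tree` and `needs_review` are hypothetical helpers, the key pattern is a common heuristic, and the configuration shape (`restrictions.apiTargets[].service`) is an assumption modeled on the JSON that `gcloud services api-keys list --format=json` is expected to return. Verify both against your own environment.

```python
import re
import tempfile
from pathlib import Path

# Assumption: Google API keys are "AIza" followed by 35 URL-safe characters.
KEY_PATTERN = re.compile(r"AIza[0-9A-Za-z_\-]{35}")
GEMINI_SERVICE = "generativelanguage.googleapis.com"


def scan_tree(root: Path) -> dict[str, list[str]]:
    """Map each file under root to the key-shaped strings found in it."""
    hits = {}
    for path in sorted(root.rglob("*")):
        if not path.is_file():
            continue
        text = path.read_text(encoding="utf-8", errors="ignore")
        found = sorted(set(KEY_PATTERN.findall(text)))
        if found:
            hits[str(path.relative_to(root))] = found
    return hits


def needs_review(key_config: dict) -> bool:
    """True if a key is unrestricted or explicitly allows the Gemini service."""
    targets = (key_config.get("restrictions") or {}).get("apiTargets") or []
    if not targets:
        return True  # no API restrictions: every enabled API is callable
    return any(t.get("service") == GEMINI_SERVICE for t in targets)


# Demo on a throwaway directory with a placeholder (not real) key.
with tempfile.TemporaryDirectory() as tmp:
    root = Path(tmp)
    fake_key = "AIza" + "A" * 35
    (root / "index.html").write_text(
        f"<script>const k = '{fake_key}';</script>", encoding="utf-8"
    )
    (root / "README.md").write_text("nothing sensitive", encoding="utf-8")
    findings = scan_tree(root)

configs = [
    {"displayName": "legacy-maps", "restrictions": {}},
    {"displayName": "maps-only",
     "restrictions": {"apiTargets": [{"service": "maps-backend.googleapis.com"}]}},
]
flagged = [c["displayName"] for c in configs if needs_review(c)]

print(findings)
print(flagged)
```

A pattern match only shows that a string looks like a key. Dedicated scanners such as TruffleHog go further by checking whether a discovered key is live, so treat this sketch as a first pass rather than a replacement.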

Broader Implications

This incident reflects a broader pattern in which identifiers designed to be public silently acquire sensitive AI privileges. As AI capabilities are added to existing platforms, the attack surface for legacy credentials grows in ways their original owners never anticipated. Organizations should audit key scopes whenever a new API is enabled in a project, rather than assuming that a key’s risk is fixed at the time it was created.