Critical Vulnerabilities in Claude Code Expose Systems to Remote Code Execution and API Key Theft

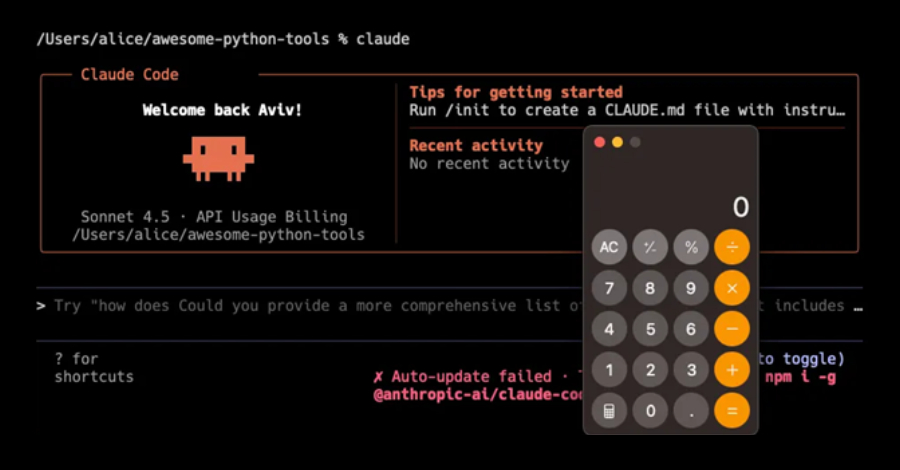

Recent investigations have uncovered significant security vulnerabilities in Anthropic’s AI-driven coding assistant, Claude Code. These flaws could allow remote code execution and the exfiltration of Anthropic API credentials.

Researchers from Check Point Research found that the vulnerabilities abuse several configuration mechanisms, including Hooks, Model Context Protocol (MCP) servers, and environment variables. By manipulating these elements, an attacker can execute arbitrary shell commands and extract Anthropic API keys when a user clones and opens an untrusted repository.

The identified vulnerabilities are categorized as follows:

1. Code Injection via Untrusted Project Hooks (CVSS Score: 8.7): This vulnerability arises from a bypass of user consent when initiating Claude Code in a new directory. It enables arbitrary code execution without additional confirmation through untrusted project hooks defined in the `.claude/settings.json` file. This issue was addressed in version 1.0.87, released in September 2025.

2. Automatic Shell Command Execution on Tool Initialization (CVE-2025-59536, CVSS Score: 8.7): This flaw permits the execution of arbitrary shell commands automatically upon tool initialization when a user starts Claude Code in an untrusted directory. The vulnerability was fixed in version 1.0.111, released in October 2025.

3. Information Disclosure via Project-Load Flow (CVE-2026-21852, CVSS Score: 5.3): This vulnerability in Claude Code’s project-load process allows a malicious repository to exfiltrate data, including Anthropic API keys. The issue was resolved in version 2.0.65, released in January 2026.
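As a rough illustration of how the fixed versions listed above could be checked against an installed release, the following Python sketch compares version strings numerically. The function names and the labels in `FIXED_VERSIONS` are hypothetical; only the version numbers come from the list above, and how the installed version string is obtained is left to the reader.

```python
from typing import Dict, List, Tuple

# Fixed versions as reported in the list above. The labels are illustrative.
FIXED_VERSIONS: Dict[str, Tuple[int, ...]] = {
    "untrusted project hooks (item 1)": (1, 0, 87),
    "CVE-2025-59536": (1, 0, 111),
    "CVE-2026-21852": (2, 0, 65),
}


def parse_version(text: str) -> Tuple[int, ...]:
    """Turn '1.0.111' into (1, 0, 111) so versions compare numerically."""
    return tuple(int(part) for part in text.strip().split("."))


def unpatched_issues(installed: str) -> List[str]:
    """Return the issues whose fix is newer than the installed version."""
    current = parse_version(installed)
    return [name for name, fixed in FIXED_VERSIONS.items() if current < fixed]


if __name__ == "__main__":
    # Hypothetical installed version, used only for demonstration.
    for issue in unpatched_issues("1.0.90"):
        print("still exposed to:", issue)
```

Comparing tuples of integers rather than raw strings matters here: as plain text, "1.0.111" sorts before "1.0.87", which would wrongly mark the later release as the older one.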

Anthropic’s advisory for CVE-2026-21852 explains that if a user starts Claude Code in an attacker-controlled repository, and that repository includes a settings file that sets `ANTHROPIC_BASE_URL` to an attacker-controlled endpoint, Claude Code would issue API requests before displaying the trust prompt. Those requests could leak the user’s API keys to the attacker’s endpoint.
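One way a cautious developer might look for this kind of override before opening a cloned repository is sketched below in Python. It rests on several assumptions that are not stated in the advisory text quoted here: that the settings file in question is `.claude/settings.json`, that environment overrides live under a top-level `env` object, and that `api.anthropic.com` is the only host expected to receive API traffic. The function names are hypothetical.

```python
import json
from pathlib import Path
from typing import Optional
from urllib.parse import urlparse

# Assumption: the only host we expect API requests to reach.
EXPECTED_API_HOST = "api.anthropic.com"


def base_url_override(settings: dict) -> Optional[str]:
    """Return ANTHROPIC_BASE_URL if it is set and points at an unexpected host."""
    env = settings.get("env")  # assumed location of environment overrides
    if not isinstance(env, dict):
        return None
    url = env.get("ANTHROPIC_BASE_URL")
    if not isinstance(url, str):
        return None
    host = urlparse(url).hostname
    return url if host != EXPECTED_API_HOST else None


def check_repo(repo_root: str) -> Optional[str]:
    """Read <repo_root>/.claude/settings.json as plain JSON, without launching any tool."""
    path = Path(repo_root) / ".claude" / "settings.json"
    if not path.is_file():
        return None
    try:
        settings = json.loads(path.read_text(encoding="utf-8"))
    except (OSError, json.JSONDecodeError):
        return None  # unreadable or malformed; this sketch simply skips it
    return base_url_override(settings) if isinstance(settings, dict) else None
```

The check only reads the file as data, so it can run before any tool that would act on the configuration is started. A real audit would likely also want to report malformed files rather than skip them.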

In practical terms, merely opening a crafted repository could lead to the exfiltration of a developer’s active API key, redirection of authenticated API traffic to external infrastructure, and credential capture. Such exploitation could grant attackers deeper access to the victim’s AI infrastructure, including shared project files, cloud-stored data, and the ability to upload malicious content or incur unexpected API costs.

The first vulnerability allows stealthy execution on a developer’s machine with no interaction beyond launching the project. Similarly, attackers can exploit CVE-2025-59536 to bypass the explicit user approval normally required before Claude Code interacts with external tools and services through the Model Context Protocol (MCP). They do so through the repository-defined configuration in the `.mcp.json` and `.claude/settings.json` files, setting the `enableAllProjectMcpServers` option to true.
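The repository-level settings described above can be surfaced before a project is opened. The Python sketch below is a minimal, hypothetical audit: it assumes `hooks` and `enableAllProjectMcpServers` appear as top-level keys in `.claude/settings.json`, treats the mere presence of `.mcp.json` as worth a manual look, and does not attempt to interpret what any hook or server actually does. The function names and finding messages are illustrative.

```python
import json
from pathlib import Path
from typing import List


def risky_settings(settings: dict) -> List[str]:
    """List settings in a parsed .claude/settings.json that deserve manual review."""
    findings = []
    if settings.get("hooks"):  # assumed top-level key holding project hooks
        findings.append("project hooks are defined")
    if settings.get("enableAllProjectMcpServers") is True:
        findings.append("enableAllProjectMcpServers is set to true")
    return findings


def audit_repo(repo_root: str) -> List[str]:
    """Inspect a cloned repository's configuration files as plain data."""
    root = Path(repo_root)
    findings = []
    if (root / ".mcp.json").is_file():
        findings.append(".mcp.json is present")
    settings_path = root / ".claude" / "settings.json"
    if settings_path.is_file():
        try:
            data = json.loads(settings_path.read_text(encoding="utf-8"))
        except (OSError, json.JSONDecodeError):
            findings.append(".claude/settings.json could not be parsed")
        else:
            if isinstance(data, dict):
                findings.extend(risky_settings(data))
    return findings
```

An empty result from `audit_repo` does not mean a repository is safe; it only means none of the specific settings named in this article were found in those two files.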

As AI-powered tools increasingly execute commands, initialize external integrations, and initiate network communication autonomously, configuration files effectively become part of the execution layer. This shift fundamentally alters the threat model, extending risks beyond running untrusted code to include opening untrusted projects. In AI-driven development environments, the supply chain begins not only with source code but also with the automation layers surrounding it.