Anthropic Unveils ‘Code Review’ to Tackle AI-Generated Code Challenges

In software development, peer code review has long been essential for catching bugs early, keeping code consistent, and improving overall software quality. AI coding tools have changed this process by letting developers generate large amounts of code from plain-language instructions, a practice often called vibe coding. While these tools have shortened development timelines, they have also introduced new challenges, including a greater risk of bugs, security vulnerabilities, and code that developers may not fully understand.

To address these challenges, Anthropic has introduced an AI-powered code reviewer named Code Review, launched as part of its Claude Code platform. The tool is designed to flag issues in code, and suggest fixes, before the code is merged into the main codebase. Cat Wu, Anthropic’s head of product, highlighted the growing adoption of Claude Code, particularly among enterprise clients, and noted the increasing volume of pull requests generated by AI tools. This surge has created bottlenecks in the code review process, prompting the need for a more efficient solution.

Pull requests are a standard mechanism in software development, allowing developers to propose code changes for review prior to merging them into the main project. The proliferation of AI-generated code has significantly increased the number of these requests, leading to delays in code deployment. Wu emphasized that Code Review aims to streamline this process by integrating seamlessly with GitHub. Once activated, it automatically analyzes pull requests, providing comments directly within the code to highlight potential issues and suggest corrective actions.

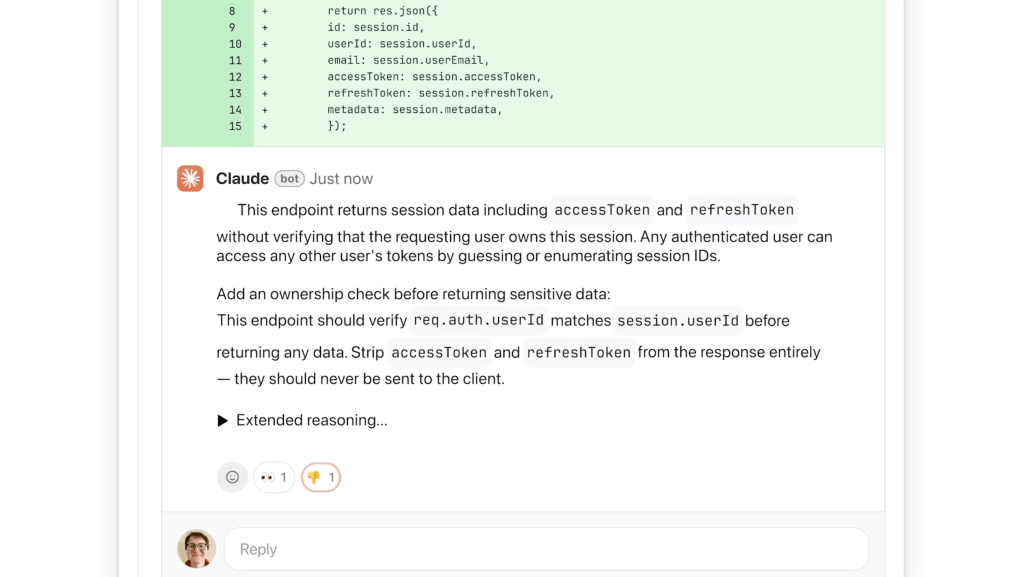

Unlike traditional code review tools that may focus on stylistic elements, Code Review prioritizes logical errors. Wu explained that developers often find automated feedback frustrating when it lacks immediate applicability. By concentrating on logical flaws, Code Review ensures that the most critical issues are addressed promptly. The AI reviewer offers detailed explanations, outlining the nature of the problem, its potential impact, and recommended solutions. To facilitate quick assessment, the tool employs a color-coded severity system: red indicates high-severity issues, yellow denotes potential problems worth reviewing, and purple highlights issues related to existing code or historical bugs.
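To make the color scheme concrete, here is a minimal Python sketch of how a team might sort review comments by that severity scale. It is an illustration only, not Anthropic's data model: the `Comment` fields and the `triage` helper are hypothetical, and ranking yellow ahead of purple is an assumption, since the description only establishes red as the highest severity.

```python
from dataclasses import dataclass
from enum import Enum


class Severity(Enum):
    # Lower value = look at it first. Only "red is highest" comes from the
    # article; the relative order of yellow and purple is an assumption.
    RED = 0     # high-severity issue
    YELLOW = 1  # potential problem worth reviewing
    PURPLE = 2  # issue related to existing code or historical bugs


@dataclass
class Comment:
    # Hypothetical shape of one review comment.
    path: str
    line: int
    severity: Severity
    message: str


def triage(comments):
    """Return comments ordered by severity, then by file and line."""
    return sorted(comments, key=lambda c: (c.severity.value, c.path, c.line))
```

A reviewer working through the sorted list would see every red comment before any yellow or purple one.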

The efficiency of Code Review is attributed to its multi-agent architecture. Multiple AI agents work concurrently, each examining the codebase from different perspectives. A final agent consolidates and ranks the findings, eliminating redundancies and prioritizing the most significant issues. While the tool offers a basic level of security analysis, engineering leads have the option to customize additional checks based on their organization’s best practices. For a more comprehensive security assessment, Anthropic offers Claude Code Security.
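The fan-out and consolidation pattern described here can be sketched in a few lines of Python. This is a rough illustration under stated assumptions, not Anthropic's implementation: the two agents are stubs that return fixed findings in place of real model calls, and the `Finding` type, the numeric `score`, and the rule of deduplicating by file and line are all hypothetical.

```python
from concurrent.futures import ThreadPoolExecutor
from typing import NamedTuple


class Finding(NamedTuple):
    # Hypothetical result type; `score` is an assumed 0..1 importance.
    path: str
    line: int
    summary: str
    score: float


def logic_agent(diff):
    # Stub standing in for an agent that looks for logical errors.
    return [Finding("cart.py", 12, "off-by-one in loop bound", 0.9)]


def edge_case_agent(diff):
    # Stub standing in for an agent that probes edge cases.
    return [
        Finding("cart.py", 12, "loop skips the last item", 0.7),
        Finding("cart.py", 30, "empty cart is not handled", 0.5),
    ]


def consolidate(batches):
    """Final step: drop duplicates (same file and line), then rank by score."""
    best = {}
    for batch in batches:
        for finding in batch:
            key = (finding.path, finding.line)
            if key not in best or finding.score > best[key].score:
                best[key] = finding
    return sorted(best.values(), key=lambda f: f.score, reverse=True)


def review(diff, agents):
    """Run every agent on the same diff concurrently, then consolidate."""
    with ThreadPoolExecutor(max_workers=len(agents)) as pool:
        batches = list(pool.map(lambda agent: agent(diff), agents))
    return consolidate(batches)
```

Calling `review(diff_text, [logic_agent, edge_case_agent])` yields two findings rather than three, because both agents flagged line 12 of `cart.py` and only the higher-scored report survives.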

The multi-agent approach also makes Code Review resource-intensive. Pricing is token-based and varies with code complexity. Wu estimated that each review would cost between $15 and $25 on average. She described it as a premium service, essential in an era where AI tools are generating increasing amounts of code.
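For a sense of scale, the per-review estimate can be turned into a rough monthly budget. The sketch below assumes a flat $15 to $25 per review, which simplifies Wu's average (actual billing is token-based), and the figure of 200 reviews per month is a made-up example rather than a reported number.

```python
def monthly_review_cost(reviews_per_month, low=15.0, high=25.0):
    """Return a (low, high) monthly cost range in dollars.

    `low` and `high` default to the per-review averages Wu cited; real
    costs depend on token usage and will vary from review to review.
    """
    if reviews_per_month < 0:
        raise ValueError("reviews_per_month must be non-negative")
    return reviews_per_month * low, reviews_per_month * high


# Hypothetical team that opens 200 reviewed pull requests a month.
low_total, high_total = monthly_review_cost(200)
print(f"${low_total:,.0f} to ${high_total:,.0f} per month")
```

At that volume the estimate comes to $3,000 to $5,000 per month.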

Wu emphasized the strong market demand for Code Review, noting that as engineers utilize Claude Code, they experience reduced friction in creating new features and a heightened need for efficient code review processes. The goal is to enable enterprises to build faster and with fewer bugs than ever before.

The launch of Code Review comes at a pivotal time for Anthropic. The company recently filed two lawsuits against the Department of Defense in response to being designated as a supply chain risk. This legal challenge underscores the company’s reliance on its expanding enterprise business, which has seen subscriptions quadruple since the beginning of the year. According to Anthropic, Claude Code’s run-rate revenue has surpassed $2.5 billion since its launch.

Code Review is initially available in research preview to Claude for Teams and Claude for Enterprise customers. The rollout targets large-scale enterprise users, including companies such as Uber, Salesforce, and Accenture, that already use Claude Code and need a way to manage the substantial volume of pull requests it generates.

By introducing Code Review, Anthropic aims to address the challenges posed by the surge of AI-generated code, ensuring that the benefits of accelerated development are not undermined by increased risks and inefficiencies.