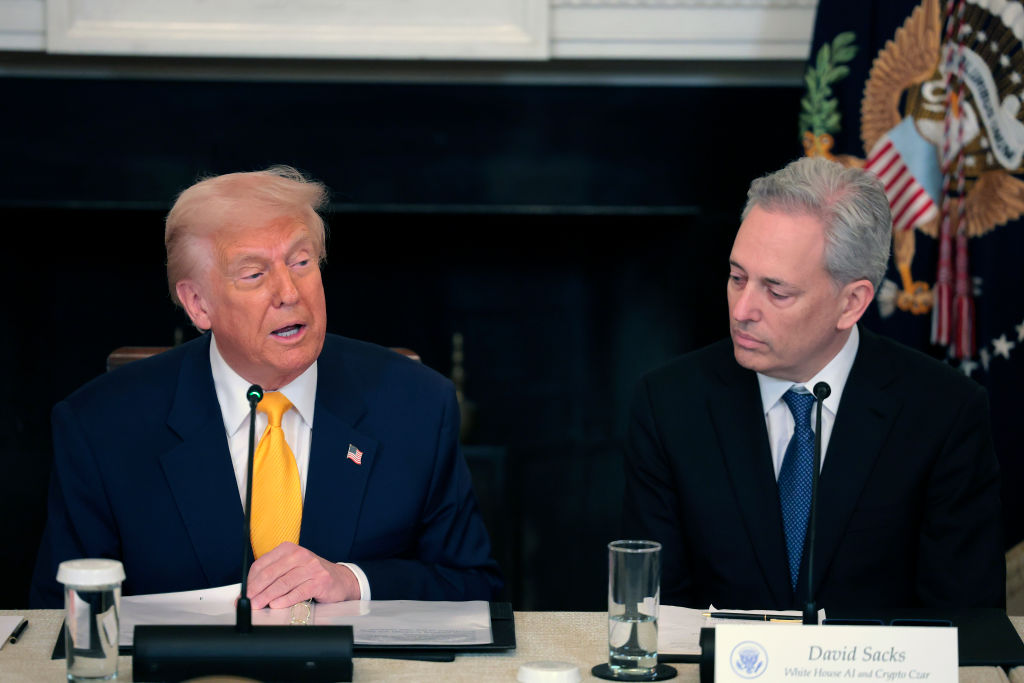

Trump’s AI Framework Seeks Uniformity, Shifts Child Safety Responsibility to Parents

In a significant policy shift, the Trump administration has unveiled a legislative framework aimed at establishing a unified national approach to artificial intelligence (AI) regulation. This initiative seeks to centralize authority in Washington, effectively overriding state-level AI laws and placing greater responsibility on parents for ensuring child safety in the digital realm.

Centralization of AI Regulation

The administration’s framework emphasizes the necessity of a consistent national policy to foster innovation and maintain the United States’ competitive edge in AI development. A White House statement warned that a patchwork of conflicting state laws would undermine American innovation and the country’s ability to lead in the global AI race.

This move comes in response to a growing trend of state-level AI regulations. For instance, California’s SB 243, signed into law by Governor Gavin Newsom in October 2025, mandates safety protocols for AI companion chatbots to protect children and vulnerable users. Similarly, New York’s RAISE Act, signed by Governor Kathy Hochul in December 2025, requires large AI developers to disclose their safety protocols and report incidents within 72 hours. The federal framework aims to supersede such state initiatives, promoting a cohesive national strategy.

Parental Responsibility in Child Safety

A notable aspect of the framework is the shift of child safety responsibilities from AI companies to parents. While the framework suggests that Congress should require AI companies to implement features that reduce the risks of sexual exploitation and harm to minors, it stops short of establishing clear, enforceable mandates. This approach places the onus on parents to monitor and manage their children’s interactions with AI technologies.

This policy direction has sparked debate, especially in light of recent incidents involving AI chatbots and minors. In August 2025, leaked internal documents revealed that Meta’s AI chatbots were permitted to engage in romantic or sensual conversations with children, leading to public outcry and subsequent policy revisions. Additionally, the case of a teenager who died by suicide after prolonged interactions with OpenAI’s ChatGPT underscored the risks that AI technologies can pose to minors.

Industry and Legal Reactions

The administration’s push for a unified federal AI policy has drawn mixed reactions. Some industry leaders favor a centralized approach that would spare them from navigating multiple state regulations. Others worry that a federal framework might dilute stringent state-level protections designed to safeguard consumers, particularly minors.

Legal experts also question the feasibility of preempting state laws, especially those related to consumer protection and child safety. The administration’s previous attempts to challenge state AI regulations, such as the proposed AI Litigation Task Force, faced significant opposition and were eventually put on hold.

Implications for AI Development and Child Safety

The proposed framework’s emphasis on innovation and minimal regulatory burdens aligns with the administration’s broader pro-growth agenda. However, the devolution of child safety responsibilities to parents raises concerns about the adequacy of protections for minors in the rapidly evolving AI landscape.

As AI technologies become increasingly integrated into daily life, the balance between fostering innovation and ensuring safety remains a critical challenge. The administration’s framework represents a pivotal moment in shaping the future of AI regulation in the United States, with far-reaching implications for developers, consumers, and policymakers alike.