Facebook Enhances Tools to Combat Creator Impersonation

In response to growing concerns over content impersonation and the proliferation of low-quality, AI-generated material, Meta has introduced new measures to support creators on Facebook. These initiatives aim to streamline the reporting of impersonators and clarify the platform’s definition of original content, thereby fostering a more authentic and engaging user experience.

Addressing Impersonation Challenges

The rise of AI-generated content has made impersonation more common: creators increasingly find their work copied and reposted without consent, sometimes by accounts posing as them. To address this, Meta has expanded its content protection tools so that creators can more easily identify and report unauthorized use of their content. A centralized dashboard now lets creators flag infringing material in one place, which is intended to simplify reporting and speed up action against impersonators.

This initiative builds on Meta’s earlier efforts to curb unoriginal content. In 2025, the company removed 20 million accounts involved in impersonation, and it reports a 33% reduction in impersonation reports targeting prominent creators. These actions reflect Meta’s stated commitment to protecting its users’ intellectual property.

Clarifying Original Content Standards

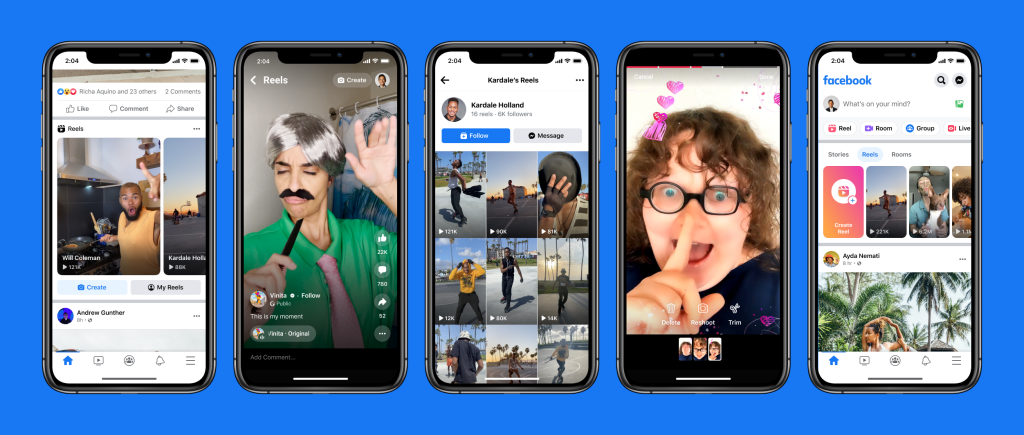

To further support creators, Meta has updated Facebook’s content guidelines with a clearer definition of original content. Under the revised guidelines, original content includes material that creators film or produce themselves, as well as reels that remix other content or add overlays to present new analysis, discussion, or information. By contrast, re-uploads with only minor edits, such as added borders or captions, are classified as unoriginal and are deprioritized in users’ feeds.

These updates are part of Meta’s broader strategy to elevate authentic creator content and diminish the visibility of low-quality, AI-generated posts. By refining content standards and enhancing reporting mechanisms, Facebook aims to create a more supportive environment for creators and a more engaging experience for users.

Broader Implications and Industry Context

The challenges posed by AI-generated content are not unique to Facebook. Other platforms, such as YouTube, have also implemented measures to detect and manage AI-generated material, particularly deepfakes involving public figures. This industry-wide trend highlights the necessity for platforms to adapt to technological advancements while safeguarding the interests of content creators and maintaining content integrity.

Meta’s approach reflects its stated aim of building a platform where original content thrives. By giving creators tools to protect their work and by setting clearer content standards, Facebook is taking concrete steps to address the complexities that AI has introduced into the digital content landscape.