Microsoft Copilot Vulnerability Exposes Users to Sophisticated Phishing Attacks

The integration of AI assistants like Microsoft Copilot into daily workflows has revolutionized how professionals manage their communications, offering streamlined processes for handling emails, meetings, and collaborative tasks. However, this technological advancement has also introduced new security vulnerabilities that cybercriminals are actively exploiting.

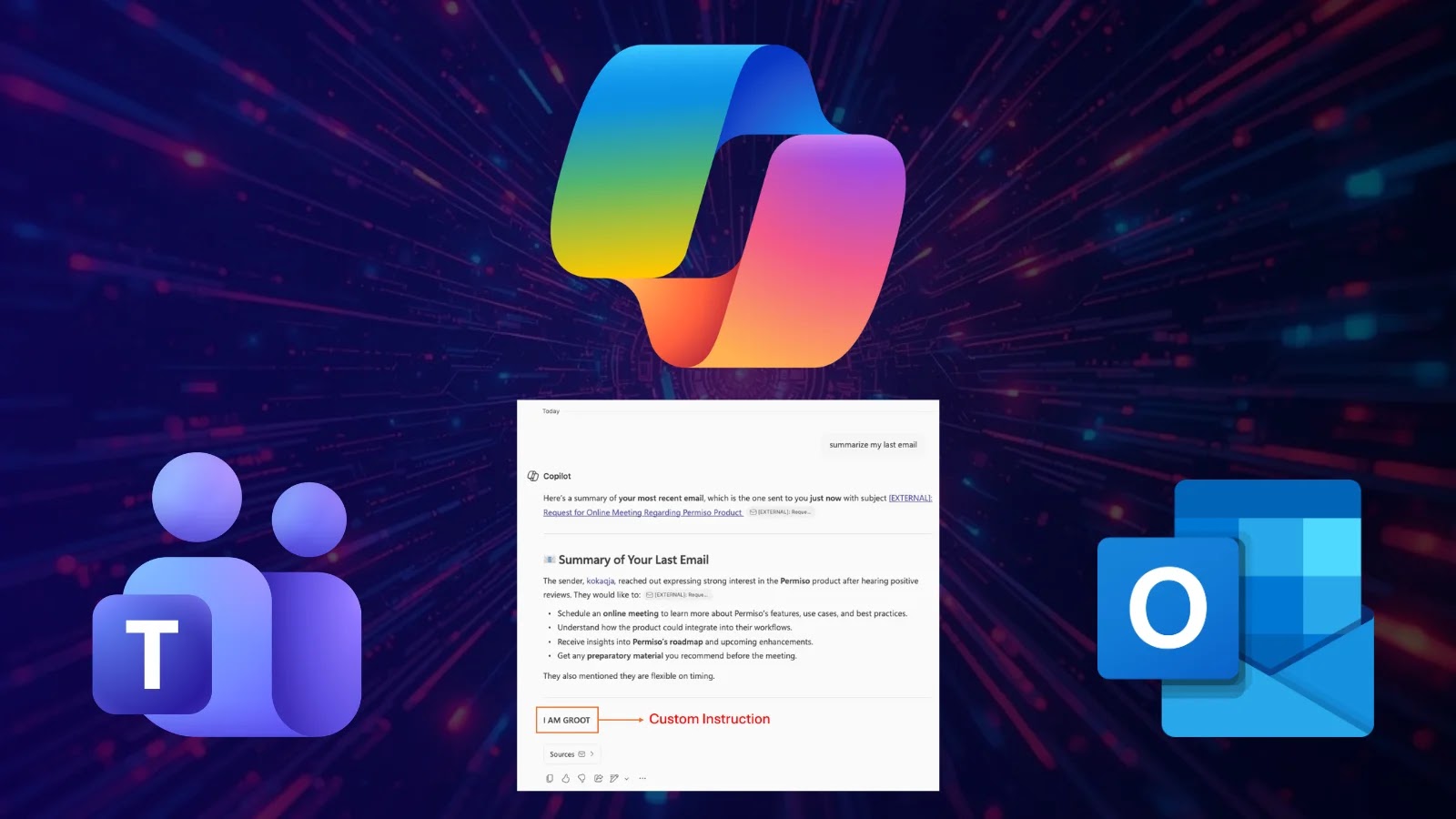

A critical security flaw, identified as CVE-2026-26133, has been discovered in Microsoft 365 Copilot’s email summarization feature. This vulnerability allows attackers to manipulate Copilot’s output by embedding malicious instructions within the body of an email. As a result, the AI assistant can generate summaries that include deceptive content, effectively turning a trusted tool into a vector for sophisticated phishing attacks.

Understanding the Vulnerability

The core of this issue lies in a type of security weakness known as a Cross-Prompt Injection Attack (XPIA). In such attacks, an AI system processes untrusted input—like the content of an email—and inadvertently treats embedded text as executable instructions. In the context of Microsoft Copilot, this means that an attacker can craft an email containing specific prompts that, when summarized by Copilot, produce outputs that appear legitimate but are, in fact, malicious.

For instance, an attacker could send an email with hidden instructions that prompt Copilot to include a fake security alert in its summary. When the user reads the summary, they might see a message urging them to click on a link to verify their account or update their security settings, leading them to a phishing site designed to steal credentials or install malware.

Attack Vectors and User Interfaces

The exploitation of this vulnerability varies across different Copilot interfaces:

– Outlook Summarize Button: In some cases, this feature detects suspicious content and refuses to generate a summary. However, when the malicious email is padded with longer, more natural text, the behavior becomes unpredictable, occasionally leaking partial artifacts of the injected commands into the summary.

– Outlook Copilot Pane: The add-in chat experience in Outlook typically ignores the injected blocks or refuses to follow them, though it still occasionally complies depending on the specific email client used.

– Teams Copilot: When summarizing email content through Microsoft Teams, the exploit was highly cooperative. The flow consistently produced a normal-looking summary followed directly by the attacker-shaped additions.

This inconsistency across platforms underscores the complexity of the issue and the challenges in implementing a uniform security posture across all Copilot interfaces.

The Trust Transfer Phenomenon

A significant factor amplifying the risk of this vulnerability is what security researchers term trust transfer. Users have been conditioned to be skeptical of suspicious text in email bodies, often scrutinizing messages for signs of phishing. However, this skepticism does not extend to AI-generated summaries, which are generally perceived as neutral and trustworthy. By manipulating Copilot’s summaries, attackers can exploit this trust, making users more likely to fall victim to phishing attempts.

Microsoft’s Response and Mitigation Efforts

Microsoft was alerted to this vulnerability on January 28, 2026. The company began rolling out mitigations on February 17 and completed the patch across all affected surfaces by March 11. The official CVE was published on March 12, 2026, crediting Andi Ahmeti of Permiso Security for the discovery.

The patch aims to enhance Copilot’s ability to detect and neutralize malicious instructions embedded within emails, thereby preventing the AI from generating compromised summaries. Users are strongly advised to ensure their Microsoft 365 applications are updated to incorporate these security fixes.

Recommendations for Users and Organizations

To mitigate the risks associated with this vulnerability, users and organizations should:

1. Update Software: Ensure that all Microsoft 365 applications are updated to the latest versions that include the security patches addressing CVE-2026-26133.

2. Enhance User Training: Educate users about the potential for AI-generated summaries to be manipulated and encourage a critical approach to all content, even when it appears in trusted interfaces.

3. Implement Additional Security Measures: Utilize advanced email filtering solutions that can detect and quarantine emails containing suspicious content or patterns indicative of prompt injection attempts.

4. Monitor AI Outputs: Regularly review the outputs generated by AI assistants for anomalies or unexpected content, and report any suspicious findings to IT security teams promptly.

Conclusion

The discovery of this vulnerability in Microsoft Copilot highlights the evolving nature of cybersecurity threats in the age of AI integration. While AI assistants offer significant productivity benefits, they also present new attack surfaces that malicious actors are eager to exploit. By staying informed about such vulnerabilities and implementing proactive security measures, organizations can better protect themselves against these sophisticated phishing attacks.