Safeguarding Your Enterprise: A Comprehensive Guide to Preventing AI Data Leaks

Artificial Intelligence (AI) has moved from a passive tool to an active participant in business operations. AI agents now autonomously send emails, transfer data, and manage software systems. These capabilities improve efficiency, but they also introduce security vulnerabilities that organizations must address.

The Emergence of AI Agents

AI agents, often referred to as digital employees, operate independently within organizational infrastructure. They can access and manipulate sensitive information without direct human oversight. This autonomy streamlines processes, but it also creates potential entry points for attackers.

The Invisible Employee: A Security Challenge

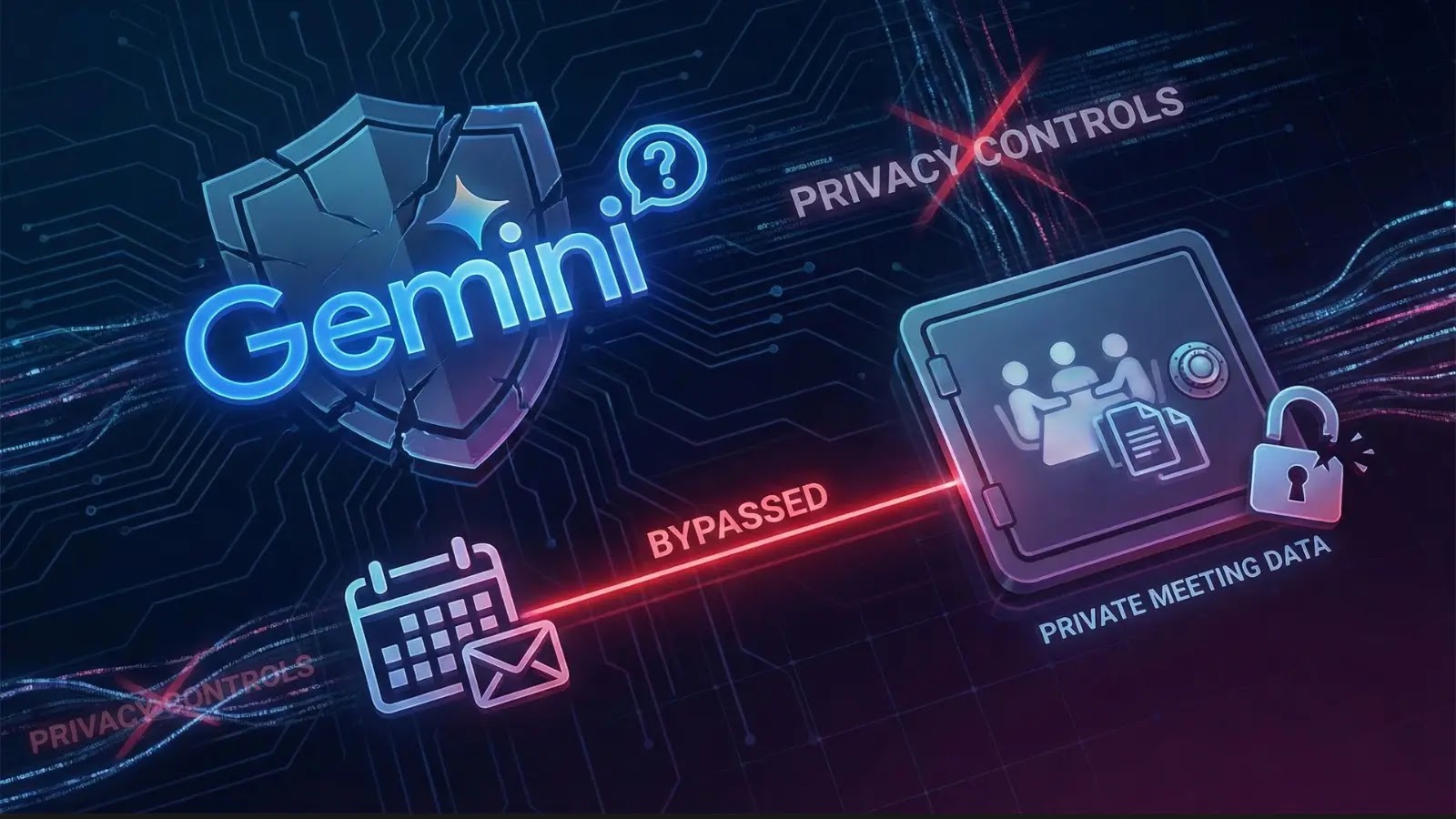

Think of an AI agent as a new employee who holds keys to every office but carries no identification badge. These agents can reach critical data and systems, often without adequate monitoring. Attackers have recognized this vulnerability: instead of breaching traditional security measures themselves, they can manipulate an AI agent into performing malicious activities on their behalf.

Understanding the Risks

Organizations that use AI to automate tasks may inadvertently expose themselves to security risks. Traditional security tools are designed primarily to protect human users, not autonomous digital entities. This gap can lead to significant data breaches and operational disruptions.

Upcoming Webinar: Beyond the Model: The Expanded Attack Surface of AI Agents

To address these emerging threats, the webinar Beyond the Model: The Expanded Attack Surface of AI Agents will examine how attackers target AI agents and how organizations can mitigate the risks. Rahul Parwani, Head of Product for AI Security at Airia, will lead the session.

Key Takeaways from the Webinar

1. The Dark Matter of Identity: Understanding why AI agents often remain invisible to security teams and methods to identify and monitor them effectively.

2. How Agents Get Tricked: Exploring how a seemingly innocuous input, such as a document with a malicious instruction embedded in it, can prompt an AI agent to disclose confidential company information (an attack commonly called indirect prompt injection).

3. The Safety Blueprint: Implementing straightforward measures to empower AI agents while ensuring they do not possess unrestricted access to critical data.
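
To make the second and third takeaways concrete, here is a minimal sketch of one common safeguard: a deny-by-default allowlist that limits which tools an agent may call and records every attempt. It is an illustration only, not the approach presented in the webinar. Every name in it (`AgentPolicy`, `run_tool`, `ToolNotPermitted`, the agent ID, and the tool names) is hypothetical and not taken from any real product or library.

```python
from dataclasses import dataclass, field
from typing import Any, Callable, Dict, FrozenSet, List, Tuple


class ToolNotPermitted(Exception):
    """Raised when an agent requests a tool outside its allowlist."""


@dataclass
class AgentPolicy:
    """Hypothetical per-agent policy: an identity plus an explicit tool allowlist."""
    agent_id: str
    allowed_tools: FrozenSet[str]
    # Each entry is (agent_id, tool_name, permitted), so security teams can
    # see what the agent attempted, including requests that were refused.
    audit_log: List[Tuple[str, str, bool]] = field(default_factory=list)

    def authorize(self, tool_name: str) -> None:
        permitted = tool_name in self.allowed_tools
        self.audit_log.append((self.agent_id, tool_name, permitted))
        if not permitted:
            # Deny by default: anything not explicitly allowed is refused.
            raise ToolNotPermitted(f"{self.agent_id} may not call {tool_name}")


def run_tool(policy: AgentPolicy, tool_name: str,
             tools: Dict[str, Callable[..., Any]], **kwargs: Any) -> Any:
    """Check the policy first, then invoke the requested tool."""
    policy.authorize(tool_name)
    return tools[tool_name](**kwargs)
```

In this sketch, an agent created with `AgentPolicy("summarizer-01", frozenset({"read_document"}))` can read documents, but a request to call `send_email` raises `ToolNotPermitted` and is still written to the audit log. If an instruction hidden in a document persuades such an agent to try emailing confidential data, the request fails at the policy check rather than depending on the model to refuse it.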

Who Should Attend?

This webinar is essential for business leaders, IT professionals, and anyone responsible for safeguarding organizational data. No advanced technical expertise is required to grasp the concepts discussed, making it accessible to a broad audience concerned with data security.

Register Now

Don’t let your AI systems become your organization’s weakest link. Secure your spot for the webinar today and equip yourself with the knowledge to protect your enterprise from AI-related data leaks.