“Charting a Responsible Future for AI: The Pro-Human Declaration’s Call to Action”

In the rapidly evolving landscape of artificial intelligence (AI), the absence of comprehensive regulatory frameworks has become increasingly evident. This gap was starkly highlighted by the recent discord between the U.S. government and AI firm Anthropic. In response, a diverse coalition of experts and public figures has introduced the Pro-Human Declaration, aiming to establish a structured approach to responsible AI development.

The Pro-Human Declaration: A Unified Vision

The Pro-Human Declaration is a bipartisan initiative that unites hundreds of specialists, former officials, and public personalities. It frames the present moment as a critical juncture for humanity, with two possible trajectories:

1. The “Race to Replace”: This path envisions a future where humans are progressively supplanted by machines, leading to a concentration of power within unaccountable institutions.

2. The “Race to Empower”: Conversely, this route advocates for AI that amplifies human capabilities, fostering a collaborative coexistence between humans and technology.

To navigate towards the latter, the declaration outlines five foundational pillars:

– Human Oversight: Ensuring that humans remain the primary decision-makers in AI applications.

– Decentralization of Power: Preventing monopolistic control over AI technologies.

– Preservation of Human Experience: Safeguarding cultural and societal values amidst technological advancements.

– Protection of Individual Liberties: Upholding personal freedoms in the face of AI integration.

– Corporate Accountability: Holding AI developers and companies legally responsible for their creations.

Key Provisions for Safe AI Development

The declaration proposes several stringent measures to ensure the safe progression of AI:

– Moratorium on Superintelligence: Halting the development of superintelligent systems until there is scientific consensus that they are safe and democratic approval has been obtained.

– Mandatory Kill Switches: Implementing fail-safe mechanisms in powerful AI systems to allow for immediate shutdown if necessary.

– Prohibition of Self-Replicating AI: Banning architectures capable of autonomous self-improvement, self-replication, or resistance to deactivation.

Contextualizing the Declaration’s Urgency

The release of the Pro-Human Declaration coincides with a period of heightened tension in the AI sector. Notably, Defense Secretary Pete Hegseth recently labeled Anthropic—a company whose AI operates on classified military platforms—as a “supply chain risk” after it declined to grant the Pentagon unrestricted access to its technology. This designation, typically reserved for entities with foreign affiliations, underscores the pressing need for clear AI governance.

In contrast, OpenAI has entered into an agreement with the Defense Department, though legal experts question the enforceability of such contracts. These developments highlight the consequences of legislative inertia in the realm of AI.

Dean Ball, a senior fellow at the Foundation for American Innovation, remarked, “This is not just some dispute over a contract. This is the first conversation we have had as a country about control over AI systems.”

Drawing Parallels: AI and Public Safety

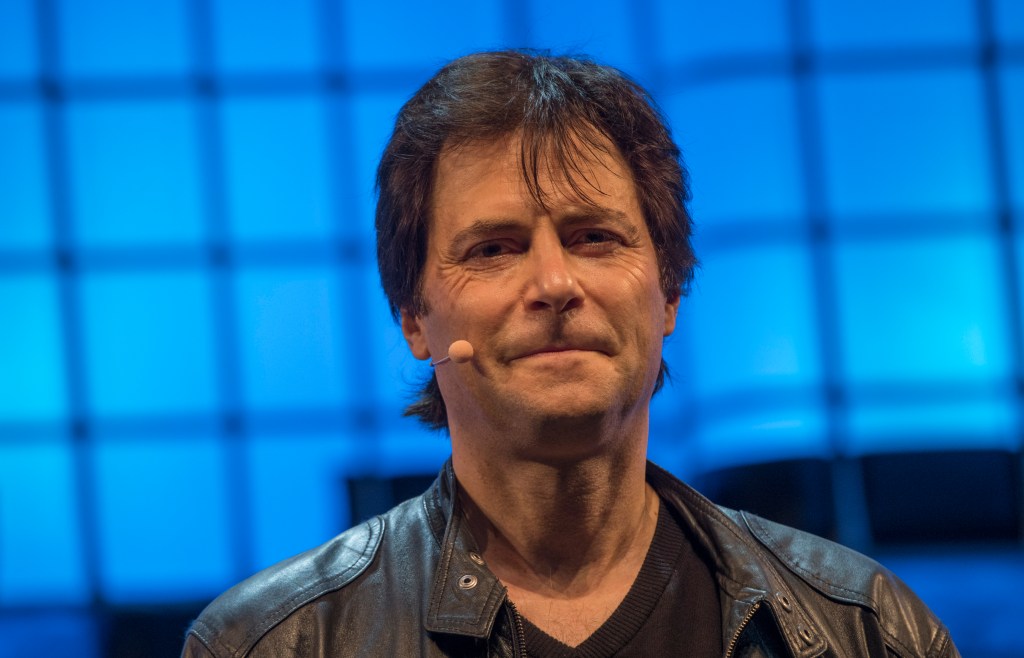

Max Tegmark, an MIT physicist and AI researcher instrumental in organizing the Pro-Human Declaration, draws an analogy to the pharmaceutical industry. He notes that drug companies cannot release products without FDA approval, ensuring public safety. Tegmark suggests a similar approach for AI, advocating for pre-release testing to prevent potential harms.

Child Safety as a Catalyst for Regulation

Tegmark identifies child safety as a pivotal issue that could drive regulatory action. The declaration calls for mandatory pre-deployment testing of AI products, especially those targeting younger users. Such testing would evaluate risks including increased suicidal ideation, exacerbation of mental health conditions, and emotional manipulation.

“If some creepy old man is texting an 11-year-old pretending to be a young girl and trying to persuade this boy to commit suicide, the guy can go to jail for that,” Tegmark stated. “We already have laws. It’s illegal. So why is it different if a machine does it?”

He posits that establishing pre-release testing for children’s products could set a precedent, leading to broader safety evaluations for AI applications.

A Unified Front Across Political Divides

The Pro-Human Declaration has garnered support from across the political spectrum, including former Trump advisor Steve Bannon and Susan Rice, who served as President Obama’s National Security Advisor. This bipartisan backing underscores a shared commitment to prioritizing human interests in the face of advancing AI technologies.

“What they agree on, of course, is that they’re all human,” Tegmark observed. “If it’s going to come down to whether we want a future for humans or a future for machines, of course they’re going to be on the same side.”

Conclusion

The Pro-Human Declaration serves as a clarion call for responsible AI development, emphasizing the need for human-centric policies and safeguards. As AI continues to permeate various facets of society, such frameworks are essential to ensure that technological progress aligns with ethical standards and public welfare.