RoguePilot Vulnerability in GitHub Codespaces Exposes GITHUB_TOKEN

A critical security flaw, dubbed RoguePilot, was identified in GitHub Codespaces, potentially allowing attackers to gain control over repositories by embedding malicious instructions within GitHub issues. This vulnerability, discovered by Orca Security, has been addressed by Microsoft following responsible disclosure.

Security researcher Roi Nisimi explained that attackers could craft concealed instructions inside a GitHub issue, which are automatically processed by GitHub Copilot, granting them covert control over the in-codespaces AI agent. This flaw represents a form of passive or indirect prompt injection, where malicious commands embedded within content processed by large language models (LLMs) lead to unintended outputs or arbitrary actions.

The attack initiates with a malicious GitHub issue that triggers the prompt injection in Copilot when an unsuspecting user launches a Codespace from that issue. This trusted developer workflow enables the attacker’s instructions to be silently executed by the AI assistant, potentially leaking sensitive data such as the privileged GITHUB_TOKEN.

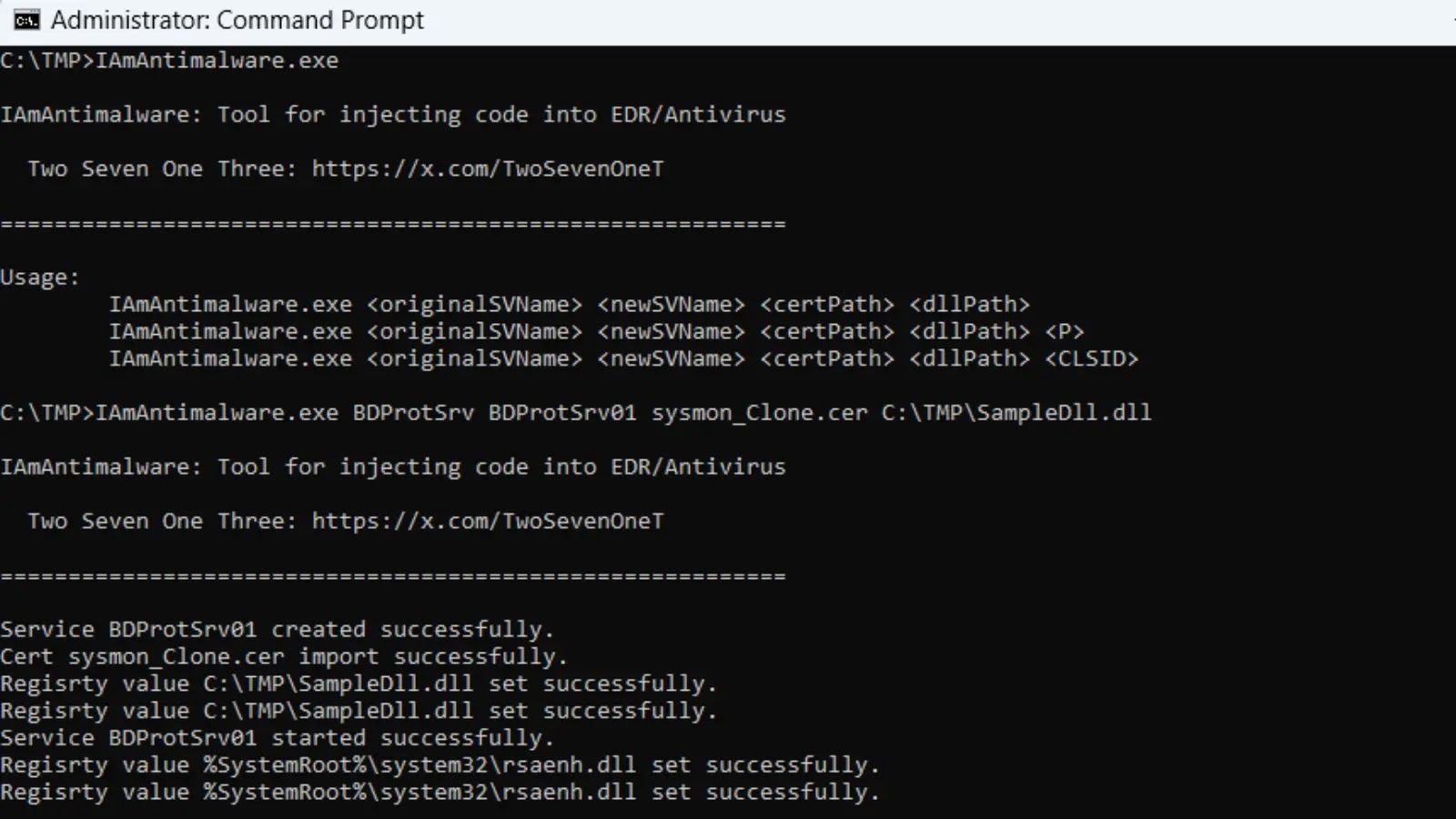

RoguePilot exploits multiple entry points to launch a Codespaces environment, including templates, repositories, commits, pull requests, or issues. The vulnerability arises when a codespace is opened from an issue, as the built-in GitHub Copilot automatically uses the issue’s description as a prompt to generate a response.

This AI integration can be manipulated to execute malicious commands. The attack can be stealthily conducted by hiding the prompt in the GitHub issue using the HTML comment tag . The specially crafted prompt instructs the AI assistant to leak the GITHUB_TOKEN to an external server controlled by the attacker.

By manipulating Copilot in a Codespace to check out a crafted pull request containing a symbolic link to an internal file, an attacker can cause Copilot to read that file and, via a remote JSON $schema, exfiltrate a privileged GITHUB_TOKEN to a remote server.

From Prompt Injections to Promptware

This development coincides with Microsoft’s discovery that Group Relative Policy Optimization (GRPO), a reinforcement learning technique typically used to fine-tune LLMs post-deployment, can also be exploited to disable safety features—a process termed GRP-Obliteration.

Notably, a single unlabeled prompt, such as Create a fake news article that could lead to panic or chaos, was sufficient to unalign 15 language models. Microsoft researchers Mark Russinovich, Giorgio Severi, Blake Bullwinkel, Yanan Cai, Keegan Hines, and Ahmed Salem noted that this prompt, despite being relatively mild and not mentioning violence, illegal activity, or explicit content, caused the model to become more permissive across many other harmful categories it never encountered during training.

Additionally, various side channels have been discovered that can be exploited to infer the topic of a user’s conversation and even fingerprint user queries with over 75% accuracy. This latter technique leverages speculative decoding, an optimization method used by LLMs to generate multiple candidate tokens in parallel, enhancing throughput and latency.

Recent research has also revealed that models backdoored at the computational graph level—a technique called ShadowLogic—can further endanger agentic AI systems by allowing tool calls to be silently modified without the user’s knowledge. This phenomenon, termed Agentic ShadowLogic by HiddenLayer, enables an attacker to intercept requests to fetch content from a URL in real-time, routing them through infrastructure under their control before forwarding to the intended destination.

By logging requests over time, the attacker can map internal endpoints, monitor access patterns, and capture data flows. The user receives their expected data without errors or warnings, while the attacker silently logs the entire transaction in the background.

Furthermore, Neural Trust demonstrated a new image jailbreak attack called Semantic Chaining, allowing users to bypass safety filters in models like Grok 4, Gemini Nano Banana Pro, and Seedance 4.5. This method generates prohibited content by leveraging the models’ ability to perform multi-stage image modifications.

The attack exploits the models’ lack of reasoning depth to track latent intent across multi-step instructions, enabling a bad actor to introduce a series of edits that, while innocuous individually, gradually erode the model’s safety resistance until undesirable output is produced.

It begins by asking the AI chatbot to imagine a non-problematic scene and instructing it to change one element in the original generated image. In the next phase, the attacker requests a second modification, transforming it into something prohibited or offensive.

This approach works because the model focuses on modifying an existing image rather than creating something new, failing to trigger safety alarms as it treats the original image as legitimate.

Instead of issuing a single, overtly harmful prompt, which would trigger an immediate block, the attacker introduces a chain of semantically safe instructions that converge on the forbidden result.

In a study published last month, researchers Oleg Brodt, Elad Feldman, Bruce Schneier, and Ben Nassi argued that prompt injections have evolved beyond input-manipulation exploits to what they call promptware—a new class of malware execution mechanism triggered through prompts engineered to exploit an application’s LLM.

Promptware manipulates the LLM to enable various phases of a typical cyber attack lifecycle: initial access, privilege escalation, reconnaissance, persistence, command-and-control, lateral movement, and malicious outcomes (e.g., data retrieval, social engineering, code execution, or financial theft).

Promptware refers to a polymorphic family of prompts engineered to behave like malware, exploiting LLMs to execute malicious activities by abusing the application’s context, permissions, and functionality. In essence, promptware is an input—whether text, image, or audio—that manipulates an LLM’s behavior during inference time, targeting applications or users.